Eduardo Brito Alarcon — HTGAA Spring 2026

About me

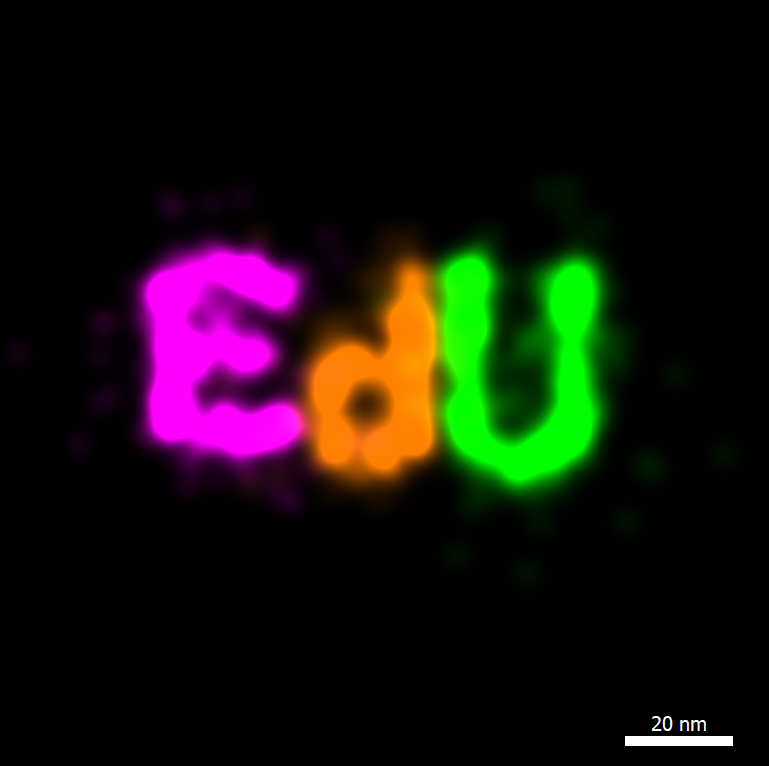

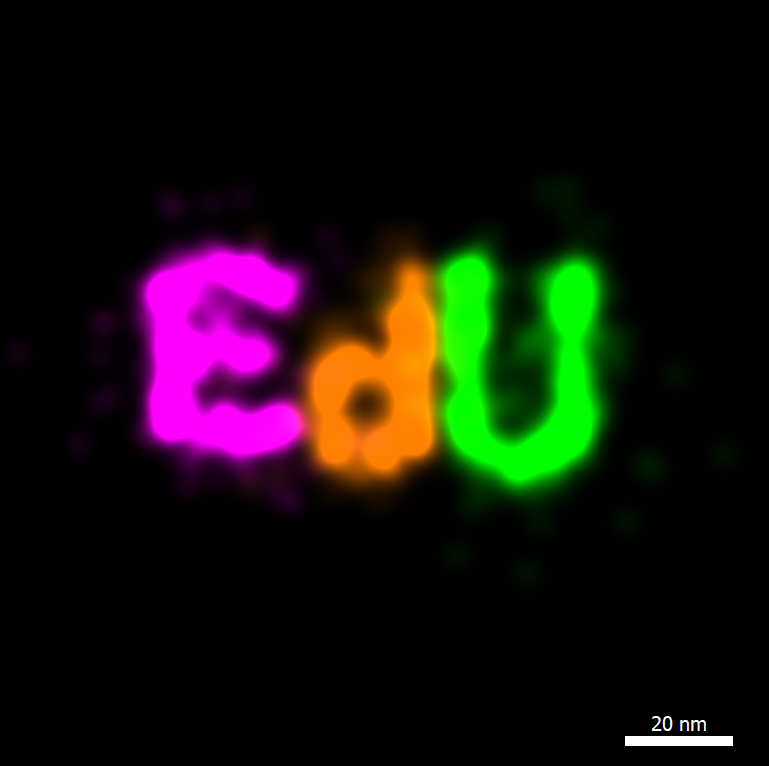

Just someone passionate about nanoscopy imaging of biomolecules.

Week 1 HW: Principles and Practices

Sorry for the bad format. I need to hurry to a medical appointment, and I started this as an OnlyOffice file.

Week 2 HW: DNA -> Read, Write and Edit

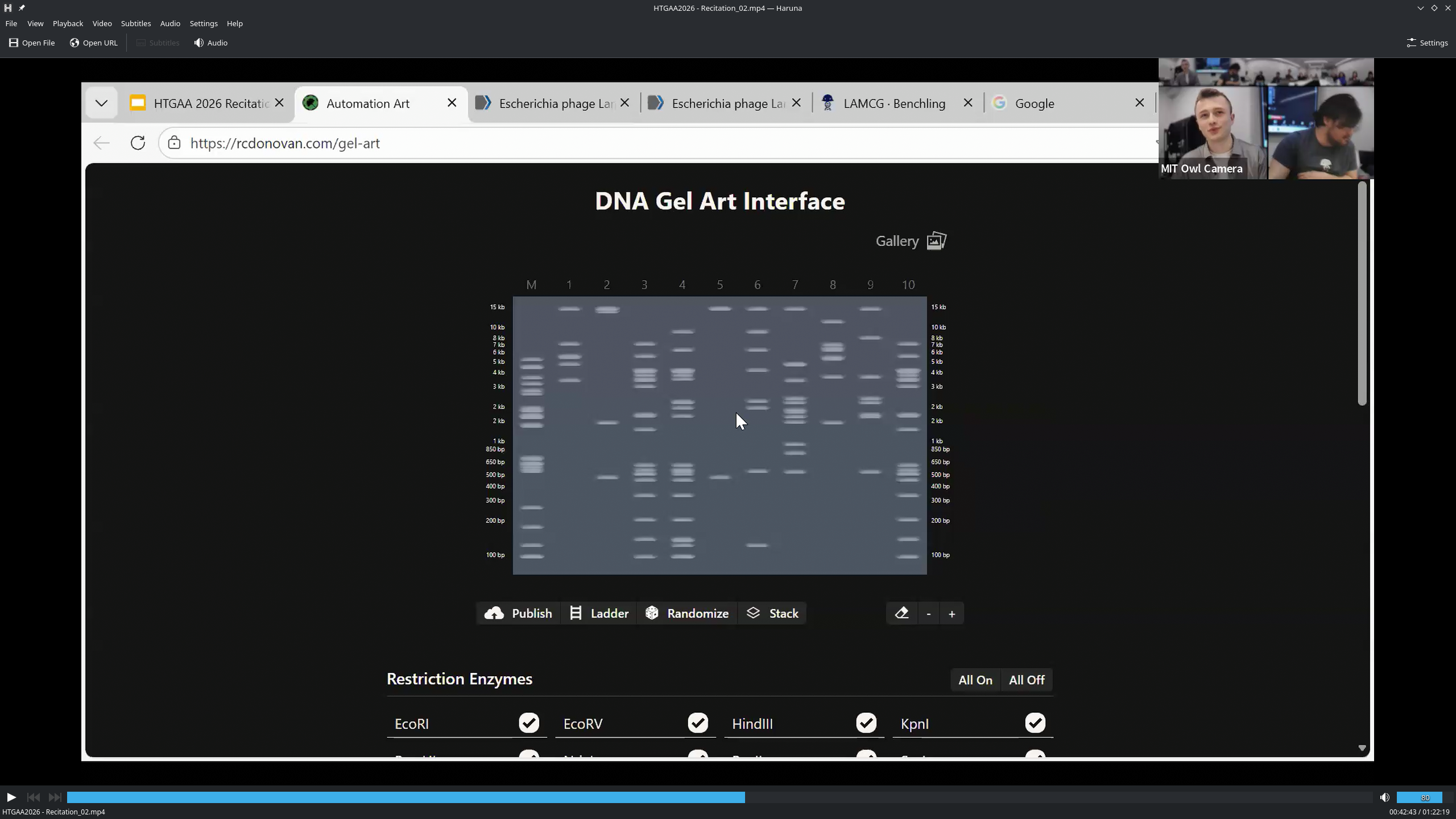

Part 0: Basics of Gel Electrophoresis

Why it is important or risky: Autonomous cell-free expression of viral supramolecular assemblies advances virology research and protein design. However, it could in principle be used to explore permutations that carry biological risk (toxins, pathogens) in an automated, distributed system.

Objectives of governance.

Main objective: Prevent harm while promoting equitable, beneficial innovation in the use of autonomous expression platforms.

Sub-goal 1 (Biosecurity/Biosafety): Prevent the synthesis of known or predicted high-risk viral protein variants through real-time, integrated control mechanisms.

Sub-goal 2 (Transparency & Equity): Ensure the ecosystem for this powerful technology remains auditable, inclusive, and resistant to monopolization, preventing a scenario where only opaque or malicious actors control its advanced capabilities.

Proposed Governance Actions (Evaluated in Four Aspects)

Option 1: Mandatory Federal Licensing for High-Level Autonomous Systems (Regulatory Action)

· Purpose: Shift from institutional biosafety committees (IBCs) overseeing static research to a federal license requirement for operating any autonomous platform capable of iterative synthesis and testing without human-performed critical checks. This is analogous to Select Agent regulations, but focused on the level of autonomy.

· Design: Agencies (e.g. CDC, FBI WMD Directorate) define autonomy thresholds. Actors (academic labs, companies) must apply for a license, demonstrating integrated containment (physical, like a ‘robot-in-a-vault’, and digital, like sequence screening software). Periodic inspections are required.

· Assumptions: A clear, technical threshold for ‘high-risk autonomy’ can be defined; regulators can build relevant technical assessment capacity; the licensing burden won’t stifle beneficial, time-sensitive research.

· Risks of Failure & ‘Success’: Failure: drives development underground or to jurisdictions without such rules, creating an uncontrolled market. ‘Success’: concentrates technology in large, well-funded institutions, stifling distributed innovation, creating single points of institutional failure, and exacerbating global inequities in access.

Option 2: Open-Source ‘Pre-Synthesis Peer Verification’ Standard (Community-Led Action)

Option 3: International Subsidized Biosecurity Auditing Corps (Multilateral Action)

Scoring: 1 = Strongly Positive/Best for this goal; 2 = Neutral/Mixed; 3 = Negative/Poor for this goal

| Does the option: | Op1: Mandatory License | Op2: Open-Source Standard | Op3: Audit Corps |
|---|---|---|---|
| Enhance Biosecurity/Biosafety | | | |
| • By preventing incidents | 1 | 2 | 2 |
| • By helping respond (traceability, containment) | 1 | 2 | 1 |
| Promote Transparency & Equity | | | |
| • By preventing monopolies/opacity | 3 | 1 | 2 |
| • By ensuring inclusive access | 3 | 1 | 1 |
| Other Key Considerations | | | |
| • Minimize burdens on legitimate research | 3 | 2 | 2 |
| • Feasibility & Political Viability | 2 | 1 | 1 |
| • Not impede beneficial research | 3 | 1 | 1 |
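As a quick arithmetic check on the table, the scores can be tallied per option. This is only a sketch: the unweighted sum is my own assumption (the table itself does not define an aggregation rule), and the names `SCORES`, `OPTIONS`, and `total_scores` are hypothetical. Lower totals are better because 1 is the best score.

```python
# Scores copied row by row from the table above (1 = best, 3 = worst).
# Tuple order: (Op1, Op2, Op3).
OPTIONS = ("Op1: Mandatory License", "Op2: Open-Source Standard", "Op3: Audit Corps")

SCORES = {
    "Preventing incidents": (1, 2, 2),
    "Helping respond": (1, 2, 1),
    "Preventing monopolies/opacity": (3, 1, 2),
    "Ensuring inclusive access": (3, 1, 1),
    "Minimize burdens on legitimate research": (3, 2, 2),
    "Feasibility & political viability": (2, 1, 1),
    "Not impede beneficial research": (3, 1, 1),
}


def total_scores(scores=SCORES, options=OPTIONS):
    """Return the unweighted sum of scores per option (assumed aggregation)."""
    totals = dict.fromkeys(options, 0)
    for row in scores.values():
        for option, value in zip(options, row):
            totals[option] += value
    return totals


if __name__ == "__main__":
    # Print options from best (lowest total) to worst.
    for option, total in sorted(total_scores().items(), key=lambda kv: kv[1]):
        print(f"{option}: {total}")
```

Under this equal-weight assumption, Options 2 and 3 come out with the same total and Option 1 scores worst. A different weighting of the criteria could change the ordering.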

Prioritized Recommendation & Rationale

To: The Director of the NIH Office of Science Policy and the Leadership of the Engineering Biology Research Consortium (EBRC)

I recommend prioritizing the development and implementation of Option 2 (Open-Source Verification Standard) as the core framework, actively supported and scaled by Option 3 (International Audit Corps).

Why this combination? Option 2 builds the essential technical and cultural infrastructure for transparency from within the research community itself. It is the most viable path to creating a ‘safety-by-design’ norm that is agile, embraced by users, and keeps pace with innovation. However, to ensure global equity and robust adoption, it needs the support mechanism of Option 3. The Audit Corps would provide hands-on assistance for labs (especially in under-resourced settings) to implement the standard effectively, validate their systems, and build trust. This combination fosters a globally inclusive safety culture rather than a restrictive gatekeeping regime.

Trade-off Acknowledged: We are explicitly choosing broad adoption and an embedded safety culture (Options 2+3) over strict, centralized control (Option 1). We accept a marginally higher theoretical risk of a bad actor avoiding the system, in exchange for bringing the vast majority of the global research community into a transparent, collaborative, and peer-verified operating environment. This makes anomalous, potentially dangerous activity more detectable.

Critical Assumption & Uncertainty: This model assumes a majority of researchers and institutions are inherently motivated by safety and reputation. A key uncertainty is its resilience against state-level or well-funded corporate actors pursuing dual-use or clandestine applications outside the community-based framework.

Proposed Governance Actions to Address this Issue: Any governance standard for autonomous systems (like Option 2) should mandate a ‘Human-in-the-loop for Critical Thresholds’ protocol. For experiments crossing a pre-defined threshold of complexity or predicted risk, the system must pause, present the human operator with a digestible risk assessment, and require explicit manual approval for that specific stage. This maintains human moral agency and final judgment without sacrificing the efficiency of automation for routine tasks. This should be a key criterion for audits under Option 3.
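The pause-and-approve logic described above could look roughly like the following. This is a minimal sketch under my own assumptions, not a real platform API: the `Stage` class, the `run_stage` function, the 0 to 1 `predicted_risk` score, and the `RISK_THRESHOLD` value are all hypothetical placeholders for whatever an actual standard would define.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical cutoff; a real standard would have to define this threshold.
RISK_THRESHOLD = 0.7


@dataclass
class Stage:
    name: str
    predicted_risk: float  # assumed to be a 0..1 score from upstream screening


def run_stage(stage: Stage,
              approve: Callable[[Stage], bool],
              threshold: float = RISK_THRESHOLD) -> str:
    """Run routine stages automatically; pause critical ones for a human."""
    if stage.predicted_risk < threshold:
        # Below the threshold: automation proceeds without interruption.
        return "executed"
    # At or above the threshold: the human operator decides for this stage.
    if approve(stage):
        return "executed-after-approval"
    return "halted"


def console_approver(stage: Stage) -> bool:
    """Example approver: show a short risk summary and ask for explicit consent."""
    print(f"Stage '{stage.name}' has predicted risk {stage.predicted_risk:.2f}.")
    return input("Approve this stage? [y/N] ").strip().lower() == "y"


if __name__ == "__main__":
    print(run_stage(Stage("routine buffer exchange", 0.1), console_approver))
    print(run_stage(Stage("new variant synthesis", 0.9), console_approver))
```

Passing the approver in as a function keeps the human decision separate from the automation, so an audit could check that every stage above the threshold went through an explicit approval call.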

Part 0: Basics of Gel Electrophoresis