Week 1 HW: Principles and Practices

- First, describe a biological engineering application or tool you want to develop and why. This could be inspired by an idea for your HTGAA class project and/or something you are already doing in your research, or something you are just curious about.

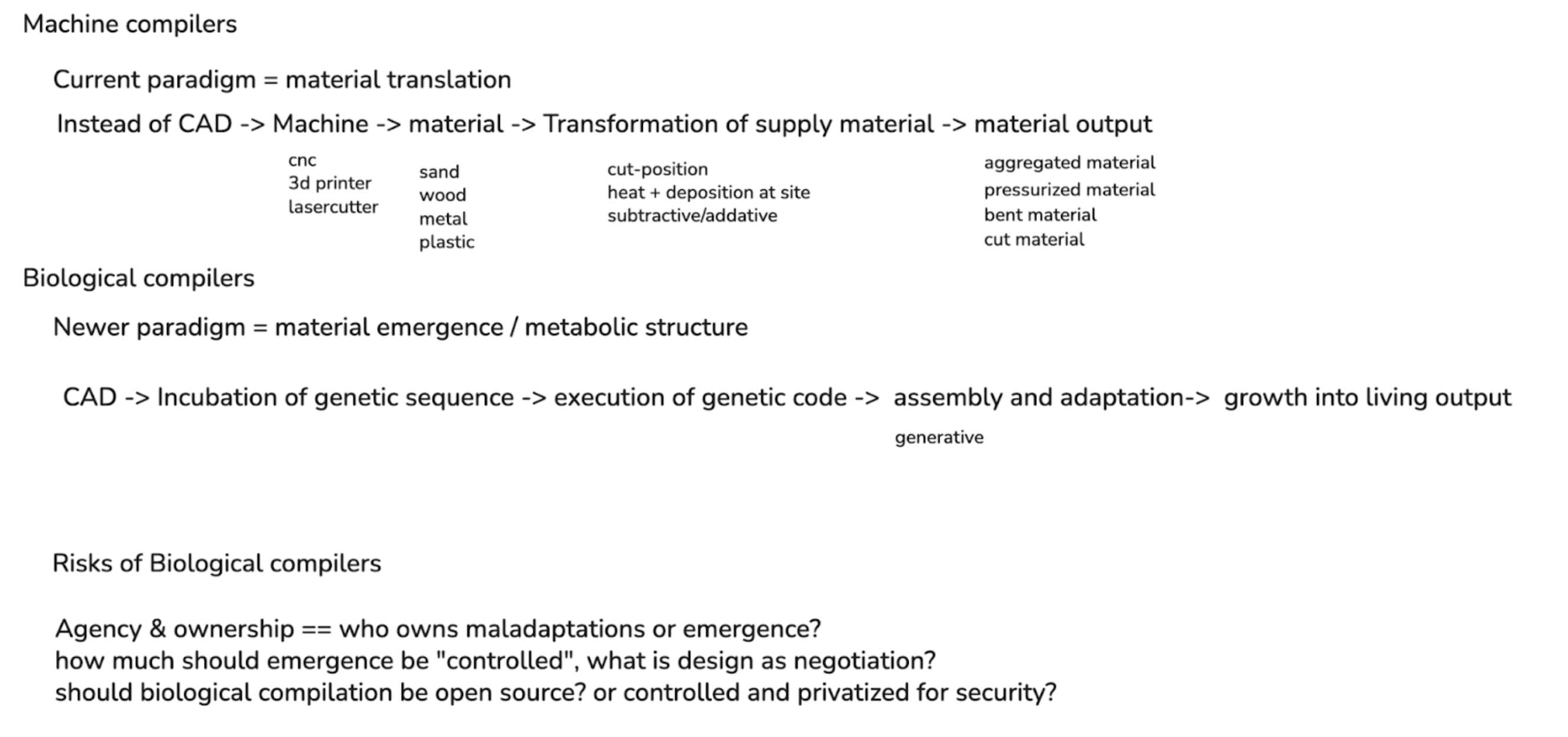

Currently, I develop software that creates new paradigms of computer-aided design (CAD) for systems that don’t fit conventional models of making. For example, most current mainstream CAD applications rely on drawing and volumetric representation as the main mechanism of formal shape creation. However, with newer fabrication systems such as robotic printing, zero-gravity printing, pigment printing, and other digital manufacturing advances, the tooling for operating this hardware lags behind the creation potential of these machines.

While I am new to synthetic biology, I see a similar ceiling imposed on the field by the limits of its tooling, with newer tools like AlphaFold greatly expanding what is possible through computation. While there are existing “CAD” systems for protein design, scaling these biological systems into a formalized design process remains a challenge.

I’m specifically interested in how humans can become part of the emergence of a living system, and how design as a field can change from the mode of human “control” into one of human “negotiation”. When designing with and alongside a living system, the criteria for design change. While gene editing offers significant potential in designing behavior, it also requires considering design over time. The design process extends beyond initial creation into growth: how the cells grow, adapt, and assemble into larger aggregations of structure.

This approach is closer to designing cellular automata at scale, working alongside a living network that can push and pull against your own design goals. This shift in theory orients towards design-in-emergence, in which the results of a design-driven process are left open for the living system to adapt.

At the same time, there should still be some intentional design process on the part of the researcher. Rather than relying on chance, computation enables an exploration of design possibilities that translates human intention into instructions that are legible to natural systems through genome editing. This act of “compilation” creates a bridge between high-level, human-authored structures, such as a structural lattice, and the genetic sequences that guide the formation of a system through biological growth processes.

This project idea would look at the potential of biological compilation, i.e. a biocompiler, as an instrument for translating code into living systems, and would examine the potential of a metabolic and emergent design process through morphogenesis. Similar to how 3D printing required new file formats and G-code to translate digital models into machine instructions, biological compilers need new intermediate representations between parametric design and organic genome sequences. Later in the course I will need to dig deeper into the technical side to work out what a concrete initial prototype of this metabolic, living design process could be.
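To make the G-code analogy a little more concrete, here is a minimal sketch of what lowering a parametric design into an intermediate representation could look like. Everything in it is hypothetical: the `Lattice` and `GrowthOp` types, the `SEED` and `BRIDGE` opcodes, and the micrometre spacing are made up for illustration, and the sketch deliberately stops at the intermediate representation rather than pretending to emit genetic sequences.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Lattice:
    """High-level, human-authored design: a regular 2D grid of nodes."""
    nx: int
    ny: int
    spacing_um: float  # hypothetical unit: micrometres between nodes


@dataclass(frozen=True)
class GrowthOp:
    """One hypothetical IR instruction, loosely analogous to a G-code line."""
    op: str      # made-up opcode, e.g. "SEED" or "BRIDGE"
    args: tuple


def lower_to_ir(design: Lattice) -> list[GrowthOp]:
    """Lower the parametric lattice into a flat list of IR instructions."""
    ops = []
    for i, j in product(range(design.nx), range(design.ny)):
        # Place a node at its physical position.
        ops.append(GrowthOp("SEED", (i * design.spacing_um, j * design.spacing_um)))
        # Connect the node to its right and upper neighbours, if they exist.
        if i + 1 < design.nx:
            ops.append(GrowthOp("BRIDGE", ((i, j), (i + 1, j))))
        if j + 1 < design.ny:
            ops.append(GrowthOp("BRIDGE", ((i, j), (i, j + 1))))
    return ops


ir = lower_to_ir(Lattice(nx=2, ny=2, spacing_um=50.0))
for instruction in ir:
    print(instruction.op, instruction.args)
```

The open question for a real biocompiler is the stage this sketch leaves out: how instructions like these would map onto genetic parts and growth behavior.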

- Next, describe one or more governance/policy goals related to ensuring that this application or tool contributes to an “ethical” future, like ensuring non-malfeasance (preventing harm). Break big goals down into two or more specific sub-goals. Below is one example framework (developed in the context of synthetic genomics) you can choose to use or adapt, or you can develop your own. The example was developed to consider policy goals of ensuring safety and security, alongside other goals, like promoting constructive uses, but you could propose other goals, for example those relating to equity or autonomy.

The governance issues relating to the ethical future of biological computation that interest me most are centered on agency and ownership. As fabricated living systems become more adaptive, and as an increasing number of individuals “code” life into being, responsibility becomes increasingly blurred.

1. Accountability and agency

Who owns, or is responsible for, maladaptations that arise through emergent biological processes? If a system’s behavior is not fully specified in advance, how should responsibility be assigned when outcomes diverge from intent?

Goal: Create an accountability framework for emergent biocompiled systems.

- Sub-goal A: Define liability when organisms deviate from designed behavior through mutation or adaptation.

- Sub-goal B: Create certification or validation standards for genetic-to-material compilation accuracy and traceability.

2. Oversight and constraint

To what degree is oversight necessary for generative biological systems? While strict guardrails or regulatory constraints may be required to prevent harm or misuse, imposing such limits may also reduce the adaptive and generative potential that makes these systems valuable in the first place.

Goal: Develop oversight mechanisms that balance safety with generative capacity.

- Sub-goal A: Define operational thresholds at which intervention, shutdown, or containment is required.

- Sub-goal B: Define the safety conditions under which experimentation would be freely allowed.

3. Ownership and access

Should biological compilers be open source and publicly accessible, or privatized and restricted to limit potential harm? While privatization may reduce risk, it also concentrates power and may limit broader participation and positive impact. On the other hand, open access would increase the democratic possibility of the technology but also may amplify its misuse.

Goal: Balance access and control of biological compiler technologies.

- Sub-goal A: Ensure equitable access to foundational tools and prevent monopolization of biological design infrastructure.

- Sub-goal B: Implement access controls or licensing models that preserve research and creative use.

Next, describe at least three different potential governance “actions” by considering the four aspects below (Purpose, Design, Assumptions, Risks of Failure & “Success”). Try to outline a mix of actions (e.g. a new requirement/rule, incentive, or technical strategy) pursued by different “actors” (e.g. academic researchers, companies, federal regulators, law enforcement, etc). Draw upon your existing knowledge and a little additional digging, and feel free to use analogies to other domains (e.g. 3D printing, drones, financial systems, etc.).

- Purpose: What is done now and what changes are you proposing?

- I will focus on what is done now in robotics computation, because I know it better than synthetic biology and it has many parallels to a living system.

- [Training] While behavior is trained on data, “digital twin” systems provide a testing ground where robotic code can learn and adapt in a simulated environment rather than making costly mistakes in a physical one.

- [Validation] Rather than assuming good intent, “red teaming” deliberately tries to provoke unwanted behavior from the machine so that it can be prevented before it happens in a real environment.

- [Deployment] Deployment is restricted to specific zones in clear partnership with local governments (e.g., Waymo in San Francisco), and includes systems for overriding control.

- [Access] Access is usually locked down so that low-level systems cannot be modified. Only high-level instructions can be entered, so that users cannot override the trained behavior or its rules.

- Based on these precedents, I propose that biological compilation systems:

- adopt a governance framework that requires simulation before any physical experimentation or deployment,

- perform adversarial tests against maladaptations and unwanted emergent behaviors,

- require clearly defined deployment areas with actor-approved governance, and

- restrict user behavior to approved modes of interaction, with clear lock-out mechanisms.

- Design: What is needed to make it “work”? (including the actor(s) involved - who must opt-in, fund, approve, or implement, etc)

- To make this work, compliance has to be a precondition for operating at all.

- Money & Publishing: Funding sources (government, nonprofits, companies) and publishers (journals, conferences) must require compliance with the conditions above before they fund or publish the work.

- Regulatory Bodies: Biocompilation must comply with international and local law, and governance bodies must be created and maintained so that they do not fall behind the pace of the emerging technology.

- Labs and Developers: Must implement proper testing, validation, and deployment practices rather than trying to fast-track the work. Licensing terms must be honored and implemented correctly.


- Assumptions: What could you have wrong (incorrect assumptions, uncertainties)?

- This might already be a solved problem.

- There may be significant enough differences between robotics and synthetic biology that their safety-deployment pipelines should not actually be similar.

- Organizations might keep things “in-house” rather than going public with information, both to limit competition and to avoid regulation.

- Risks of Failure & “Success”: How might this fail, including any unintended consequences of the “success” of your proposed actions?

- The safety pipeline does not consider the goals of the system being built. For example, if the designer’s intent is to create a fully autonomous living being, the biocompiler pipeline does not safeguard against that intent. A similar risk exists in robotics and software development (to what purpose is AI technology developed?).

- The success of a biocompilation system could have the unintended effect of hiding the supplies and resources required to procure and run such a system. In software, for example, the abstraction of the “cloud” in compute services hides the environmental cost of the datacenters behind digital systems. Something similar could happen with a biocompiler, where users no longer need to think about the technical costs of acquiring and modifying cells.


Next, score (from 1-3, with 1 as the best, or n/a) each of your governance actions against your rubric of policy goals. The following is one framework but feel free to make your own:

| Does the option: | Simulation-Based Training Requirements | Adversarial Testing | Constrained Deployment, with Override | Access Control |
|---|---|---|---|---|
| 1. Accountability & Agency | 1 | 2 | 2 | 1 |
| • Liability for maladaptation | 2 | 1 | 2 | 3 |
| • Traceability / certification | 1 | 2 | 2 | 3 |
| 2. Oversight & Constraint | 2 | 2 | 1 | 3 |
| • Intervention / shutdown thresholds | 3 | 2 | 1 | 2 |
| • Conditions for free experimentation | 2 | 2 | 3 | 1 |
| 3. Ownership & Access | 3 | 2 | 2 | 1 |
| • Prevent monopolization | 3 | 2 | 2 | 1 |
| • Preserve research & creative use | 3 | 2 | 2 | 1 |
| Other considerations | | | | |
| • Protecting the environment | 1 | 2 | 3 | n/a |
| • Protecting living systems | 1 | 2 | 3 | n/a |
| • Feasibility | 2 | 2 | 3 | 1 |
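One simple way to read the table is to average each column. The sketch below does that in Python, copying the scores row by row and skipping the n/a cells. The unweighted mean over every row (goals and sub-goals alike) is an assumption made for illustration, not part of the rubric, and the shortened action names are just labels.

```python
from statistics import mean

# Column order matches the table; names are shortened labels.
ACTIONS = ["Simulation", "Adversarial testing", "Constrained deployment", "Access control"]

# Scores copied row by row from the table (1 = best); None stands for "n/a".
SCORES = [
    [1, 2, 2, 1],        # Accountability & Agency
    [2, 1, 2, 3],        # Liability for maladaptation
    [1, 2, 2, 3],        # Traceability / certification
    [2, 2, 1, 3],        # Oversight & Constraint
    [3, 2, 1, 2],        # Intervention / shutdown thresholds
    [2, 2, 3, 1],        # Conditions for free experimentation
    [3, 2, 2, 1],        # Ownership & Access
    [3, 2, 2, 1],        # Prevent monopolization
    [3, 2, 2, 1],        # Preserve research & creative use
    [1, 2, 3, None],     # Protecting the environment
    [1, 2, 3, None],     # Protecting living systems
    [2, 2, 3, 1],        # Feasibility
]


def mean_scores(rows):
    """Average each action's column, ignoring n/a (None) cells."""
    result = {}
    for col, action in enumerate(ACTIONS):
        values = [row[col] for row in rows if row[col] is not None]
        result[action] = mean(values)
    return result


averages = mean_scores(SCORES)
# Lower is better, so sort ascending.
for action, avg in sorted(averages.items(), key=lambda kv: kv[1]):
    print(f"{action}: {avg:.2f}")
```

Because goal rows and their sub-goal rows are both counted, this weighting is crude; restricting `SCORES` to the sub-goal rows would be an equally defensible choice.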

- Last, drawing upon this scoring, describe which governance option, or combination of options, you would prioritize, and why. Outline any trade-offs you considered as well as assumptions and uncertainties. For this, you can choose one or more relevant audiences for your recommendation, which could range from the very local (e.g. to MIT leadership or Cambridge Mayoral Office) to the national (e.g. to President Biden or the head of a Federal Agency) to the international (e.g. to the United Nations Office of the Secretary-General, or the leadership of a multinational firm or industry consortia). These could also be one of the “actor” groups in your matrix.

I designed my governance actions as stages of a single process/pipeline, so I think they are all equally necessary to ensure proper deployment of biocompiled code. The main trade-off I considered comes from what I’ve seen in software over the past decade: moving quickly to get new technology out (often seen in big tech and startups) versus the more rigorous and contextual approach of academic computer science. Citizen scientists and open source developers often sit between these two worlds, so I had them primarily in mind when designing these governance actions and constraints. I imagined something similar to `git` for biotech, with control, revision, deployment, testing, and access baked into its functionality (e.g., CI/CD pipelines).
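Read as a CI/CD pipeline, the four actions become sequential gates that a design must pass before deployment. The following is a minimal sketch under that reading. The `Submission` fields, the gate functions, and the `APPROVED_ZONES` and `ALLOWED_ROLES` values are all hypothetical placeholders, not an existing standard or tool.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy values, for illustration only.
APPROVED_ZONES = {"contained-lab"}
ALLOWED_ROLES = {"licensed-operator"}


@dataclass
class Submission:
    """A hypothetical biocompiled design moving through review."""
    name: str
    simulated: bool = False
    red_teamed: bool = False
    deployment_zone: Optional[str] = None
    requested_by_role: str = "user"
    log: list = field(default_factory=list)


# Each gate mirrors one governance action and returns True on pass.
GATES = [
    ("simulation", lambda s: s.simulated),
    ("adversarial testing", lambda s: s.red_teamed),
    ("constrained deployment", lambda s: s.deployment_zone in APPROVED_ZONES),
    ("access control", lambda s: s.requested_by_role in ALLOWED_ROLES),
]


def run_pipeline(submission: Submission) -> bool:
    """Run gates in order and stop at the first failure, like a CI job."""
    for gate_name, gate in GATES:
        passed = gate(submission)
        submission.log.append(f"{gate_name}: {'pass' if passed else 'fail'}")
        if not passed:
            return False
    return True


ready = Submission("lattice-v1", simulated=True, red_teamed=True,
                   deployment_zone="contained-lab",
                   requested_by_role="licensed-operator")
rushed = Submission("lattice-v2", simulated=True)

print(run_pipeline(ready), ready.log)
print(run_pipeline(rushed), rushed.log)
```

Stopping at the first failed gate reflects the idea that the actions are stages of one process: skipping any one of them blocks deployment.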

Reflecting on what you learned and did in class this week, outline any ethical concerns that arose, especially any that were new to you. Then propose any governance actions you think might be appropriate to address those issues. This should be included on your class page for this week.

As a committed listener, the main ethical concern I’m left with after week one is “who am I to learn such skills?” From the perspective of a newcomer, synthetic biology feels akin to taking on a god-like power, and I don’t feel nearly qualified to use these tools. Weighing the ethical and moral implications of taking on such power feels nearly insurmountable. My governance action in response to this feeling is to ask whether there should be something like licensure for this technology. Similar to how lawyers need to pass the bar to practice law, should biotechnologists have to pass a similar certification program? Does one already exist?