Week 1 HW: Principles and Practices

![cover image]()

![cover image]()

Question Responses

I am highly interested in biologically engineering a type of bacterial that efficiently consumes plastics like polyethylene terephthalate (PET) and converts them to harmless byproducts. I am aware that there already exists some form naturally growing bacteria the does this, however, it is not scalable for large-scale goals. The reason for my interest in engineering such type of bacteria is to at least mitigate the harmful effects of hundreds of millions of tons of plastics that are thrown each year in oceans and landfills, destroying the biosphere in these places and causing some global effects.

The main policy to make is to ensure the saftey of this large scale operation by first mandating thorough lab tests and closed containment trials prior to releasing it to the open environment. Secondly, design the bacteria with a built-in safeguard as a kill-switch and hand it to a head comitte as a third party that is its own seperate entity and is resposibile for monitoring the status of the bacteria and initiating the kill-switch when it is deemed necessary.

Governance Action 1: Mandatory Genetic Safeguards for Plastic-Degrading Bacteria

Actor(s): Academic researchers, biotech companies, funding agencies, regulators

Purpose:

This action would require all engineered plastic-eating bacteria to include at least one validated containment mechanism before research funding, publication, or deployment.

Design:

Funding agencies will require safeguard documentation in grant proposals. Institutional Biosafety Committees then verify compliance before approval. Journals require disclosure of safeguards as a condition of publication.

Assumptions: We assume that genetic safeguards remain stable over time and that researchers can implement safeguards without major performance loss.

Risks of Failure & Success: The proposed safeguards could be lost through mutations or selective pressure and there would be no way of stopping the bacteria.

Governance Action 2: Incentives for Use in Controlled Waste-Processing Facilities

Actor(s): Governments, industry, environmental agencies

Purpose:

This action shifts deployment towards a more controlled settings rather than open ecosystems.

Design:

Governments offer tax credits or grants for bioreactor-based plastic degradation. Facilities must meet containment and disposal standards, and the environmental agencies will oversee compliance and inspections.

Assumptions:

We assume that controlled facilities can scale plastic degradation effectively and that it is feasable to provide finantial incentives to drive the growth of this industry.

Risks of Failure & Success:

High infrastructure costs may limit participation. And usually, countries who have a big issue with landfills, cannot sustain to give incentives at a large scale.

Governance Action 3: Phased Environmental Release Approval Process

Actor(s): Federal regulators, environmental agencies, researchers

Purpose:

This action introduces a standardized, stepwise approval process.

Design:

Sequential stages: lab testing, then contained field trials, then limited environmental release. Progression requires independent ecological risk review. Long-term monitoring required for post-deployment.

Assumptions: We assume that short-term trials can predict long-term ecological effects, and that regulators have sufficient expertise and resources and there are no unpredictable consequences.

Risks of Failure & Success:

Long-term impacts may emerge after approval. Lengthy approval timelines could delay urgent environmental interventions.

| Does the option: | Option 1 | Option 2 | Option 3 |

|---|

| Enhance Biosecurity | 1 | 2 | 2 |

| • By preventing incidents | 1 | 1 | 2 |

| • By helping respond | 1 | 2 | 2 |

| Foster Lab Safety | 1 | 2 | 2 |

| • By preventing incident | 1 | 3 | 2 |

| • By helping respond | 2 | 2 | 2 |

| Protect the environment | 2 | 1 | 1 |

| • By preventing incidents | 2 | 1 | 1 |

| • By helping respond | 3 | 2 | 1 |

| Other considerations | | | |

| • Minimizing costs and burdens to stakeholders | 3 | 2 | 3 |

| • Feasibility? | 1 | 3 | 2 |

| • Not impede research | 1 | 1 | 3 |

| • Promote constructive applications | 1 | 1 | 3 |

- I would priortise the 3rd option of staged testing. While, it’s true that the other options are still important and play a role in the feasability of this project. However, this is the only measure that is impelented in the environment and can test the safety of the bacterial and quickly stop it through measures that were prepared prior to thr testing. Because the engineered safeguard could be mutated and stop working causing the bacteria to go rogue, so this is by far the safest and most realistic option that allows us to predict the safety of the bacteria. This recommendation is most relevant for national environmental regulators, such as the Environmental Protection Agency (EPA), because they have the authority and infrastructure to evaluate ecological risk and enforce compliance. Federal funding agencies should also support this framework by requiring phased testing as a condition of research support. The biggest tradeoff to this approach is that a staged approval process may slow deployment. Applying these measures when this industry is still new would harm the opportunity for rapid growth of this industry and severly limit participation due to the tedious required tests. The approach also requires significant regulatory resources and scientific expertise, which may not be equally available across countries. Additionally, since this is practically a new field, there will always be uncertainties about the safety even after tests, because no one witnessed a project of this scale before, so it’s unpredictable especially at first and there has to be long term tests continually done even after the release of the bacterial into the environment to not be blindsighted by something harmful.

One ethical concern that became more apparent this week is the unpredictability of releasing engineered organisms into natural ecosystems. Even when designed for environmental benefit, plastic-eating bacteria could disrupt microbial communities, transfer genes to other organisms, or evolve in unintended ways. Another issue is governance consistency. Regulations for environmental biotechnology vary widely across regions, raising the possibility that research or deployment could shift to areas with weaker oversight. This creates uneven safety standards and increases the chance of poorly monitored releases. To address these concerns, several governance actions would be appropriate. First, regulators should require phased testing before environmental release so that risks can be evaluated in controlled stages. Second, mandatory genetic containment mechanisms should be incorporated into engineered organisms to reduce the likelihood of uncontrolled spread. Third, governments and research institutions should establish standardized reporting systems for environmental incidents to ensure rapid response and shared learning. Finally, international coordination on biotechnology guidelines would help promote consistent safety expectations and reduce regulatory gaps.

HTGAA Website Assignment

I personalized my website page, added an image for me, filled a brief describtion of myself, and added my email in my contact info. I learned how to use and properly edit my HTGAA website pages through trial and error.

Week 2 Lecture HW: DNA Read Write and Edit

Pre-lecture 2 homework questions:

Question 1: What is the error rate of polymerase? How does this compare to the length of the human genome. How does biology deal with that discrepancy?

Response: The error rate of DNA polymerase varies between 1 in 104 to 1 in 105. The length of the human genome is 3 * 109 base pairs, this would mean hundereds of mutations every cell division. However, biology dealt with that discrepancy through proofreading, which decreases the error rate to about 1 in 107, along with other correcting methods like mismatch repair, DNA damage checkpoints, and if there is still severe mutation detected, the cell will go into apoptosis.

Question 2: How many different ways are there to code (DNA nucleotide code) for an average human protein? In practice what are some of the reasons that all of these different codes don’t work to code for the protein of interest?

Response: The genetic code is degenerate, meaning multiple codons can encode the same amino acid. With 61 codons specifying 20 amino acids, each amino acid is encoded by about three codons on average. For a typical human protein of roughly 400 amino acids, this results in approximately 3400 (about 10190) possible DNA sequences that could produce the same protein. In practice, many of these sequences do not function effectively. Cells exhibit codon bias, preferring certain codons because the corresponding tRNAs are more abundant, which improves translation efficiency. Some sequences also create mRNA structures that hinder ribosome movement or reduce stability, leading to lower protein production. also, specific nucleotide patterns can unintentionally introduce regulatory signals such as premature stop cues or splice sites. For these reasons, although many DNA sequences are theoretically possible, only a subset will reliably generate the desired protein within a biological system.

Questions from Dr. LeProust:

- Most commonly used oligo synthesis method?

response: The most commonly used oligonucleotide synthesis method is solid-phase phosphoramidite synthesis.

- Why it’s hard to make oligos >200 nt directly?

Response: It is difficult to synthesize oligos longer than about 200 nucleotides because each nucleotide addition is not perfectly efficient. Even with ~99% coupling efficiency, small errors accumulate at every step, causing many strands to be truncated or contain mistakes by the end of the process. Longer sequences also become harder to chemically handle and purify, reducing overall yield and quality.

- Why you can’t make a 2000 bp gene by direct oligo synthesis?

Response: A 2000 bp gene cannot be made directly through oligo synthesis because the stepwise chemical process introduces small errors during each nucleotide addition. As the sequence length increases, these errors accumulate, leading to a very low proportion of full-length, correct DNA strands. The resulting mixture would contain many truncated or incorrect sequences, making purification impractical. Instead, long genes are typically assembled from shorter, high-quality oligos using methods such as PCR or gene assembly.

Question from Dr. George Church:

What are the 10 essential amino acids in all animals and how does this affect your view of the “Lysine Contingency”?

Response: The ten essential amino acids in animals are histidine, isoleucine, leucine, lysine, methionine, phenylalanine, threonine, tryptophan, valine, and arginine (arginine is especially essential during growth). They are considered essential because animals cannot synthesize them in sufficient amounts and must obtain them through diet. This weakens the idea behind a “lysine contingency,” which proposes engineering organisms to depend on lysine so they cannot survive outside controlled environments. Since lysine is already common in many natural environments and diets, relying on it alone would not provide strong containment. An organism could potentially obtain lysine from surrounding biological material, making the safeguard less reliable. Effective biocontainment typically requires dependencies on nutrients that are rare or entirely synthetic rather than widely available amino acids.

Week 2 Homework

Part 1:

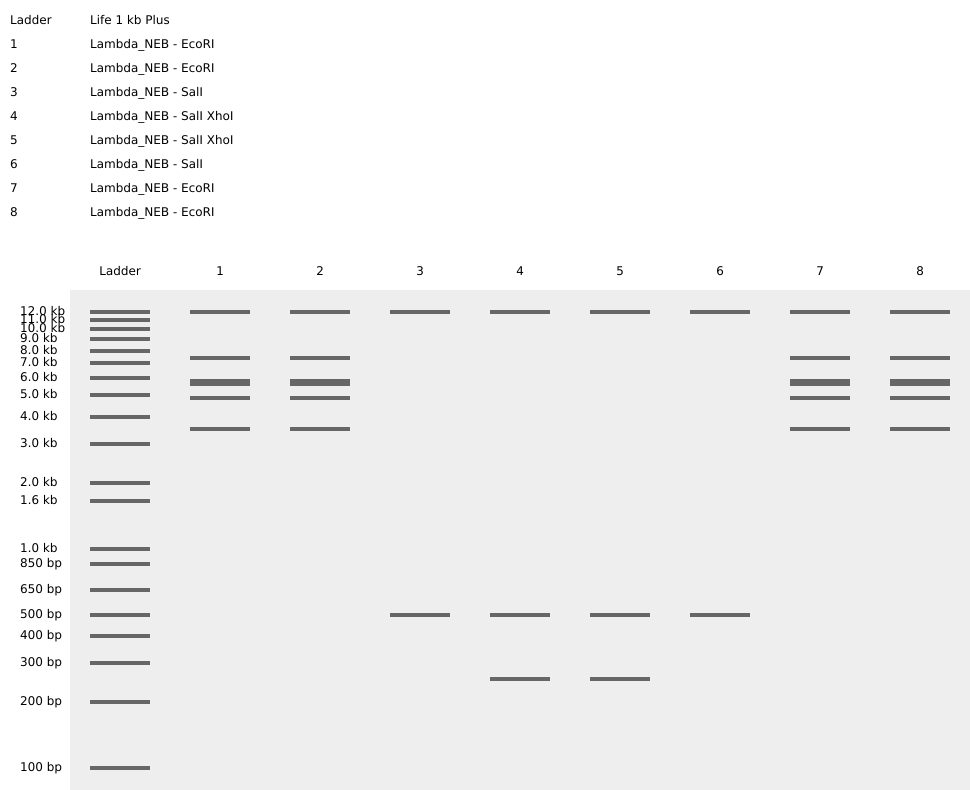

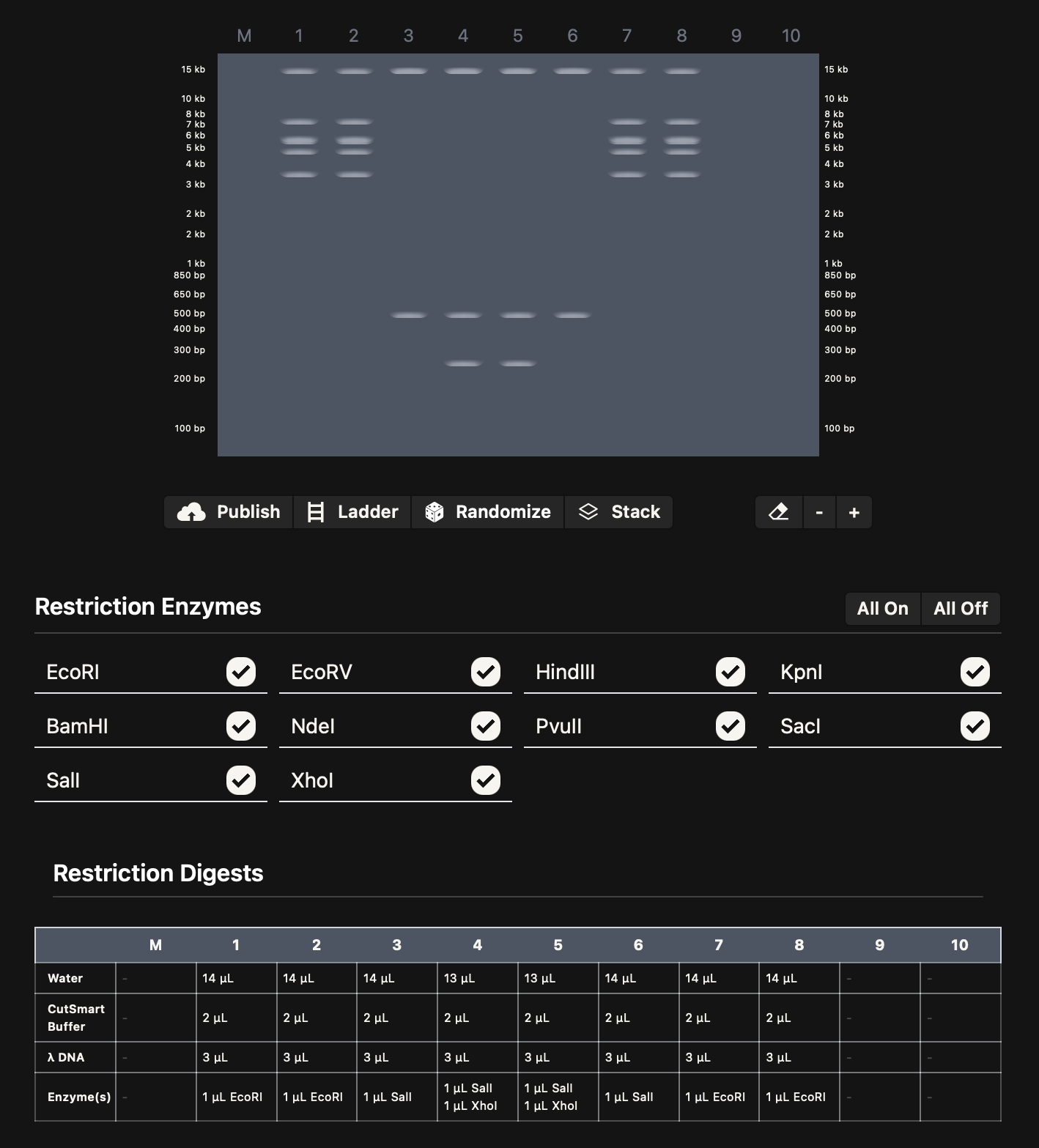

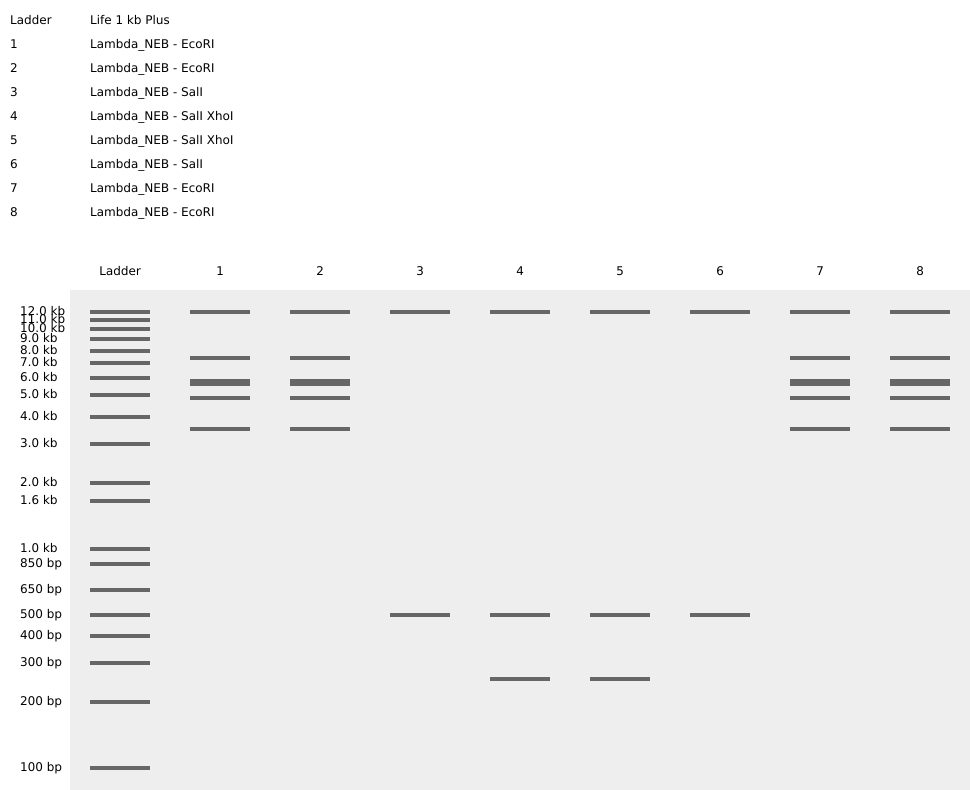

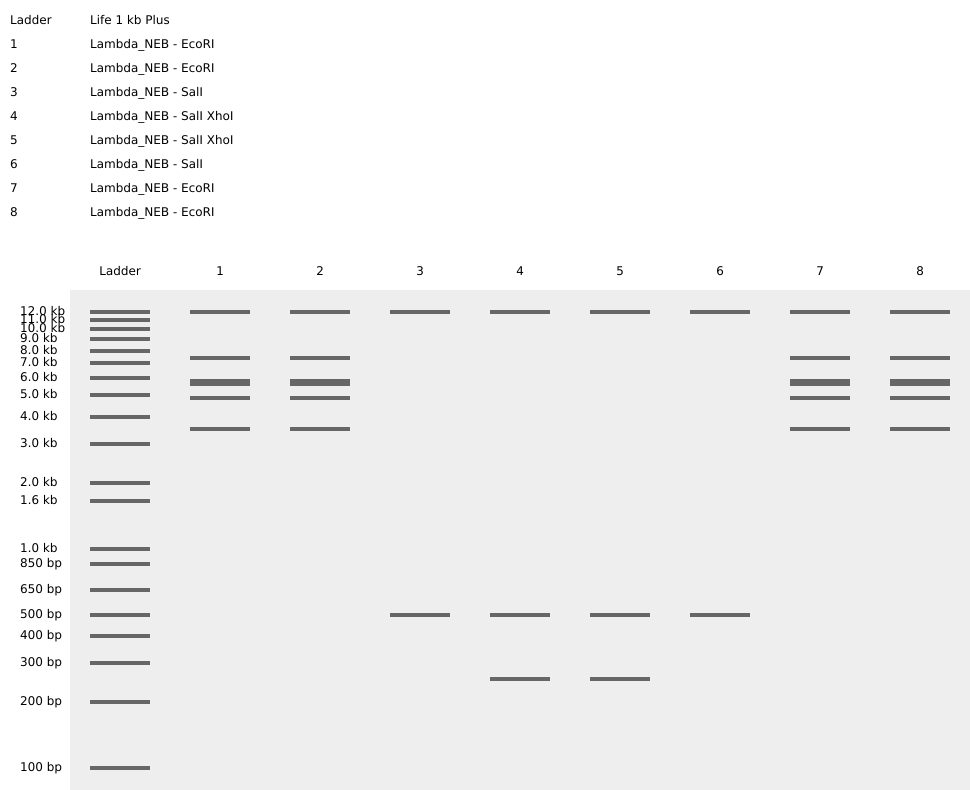

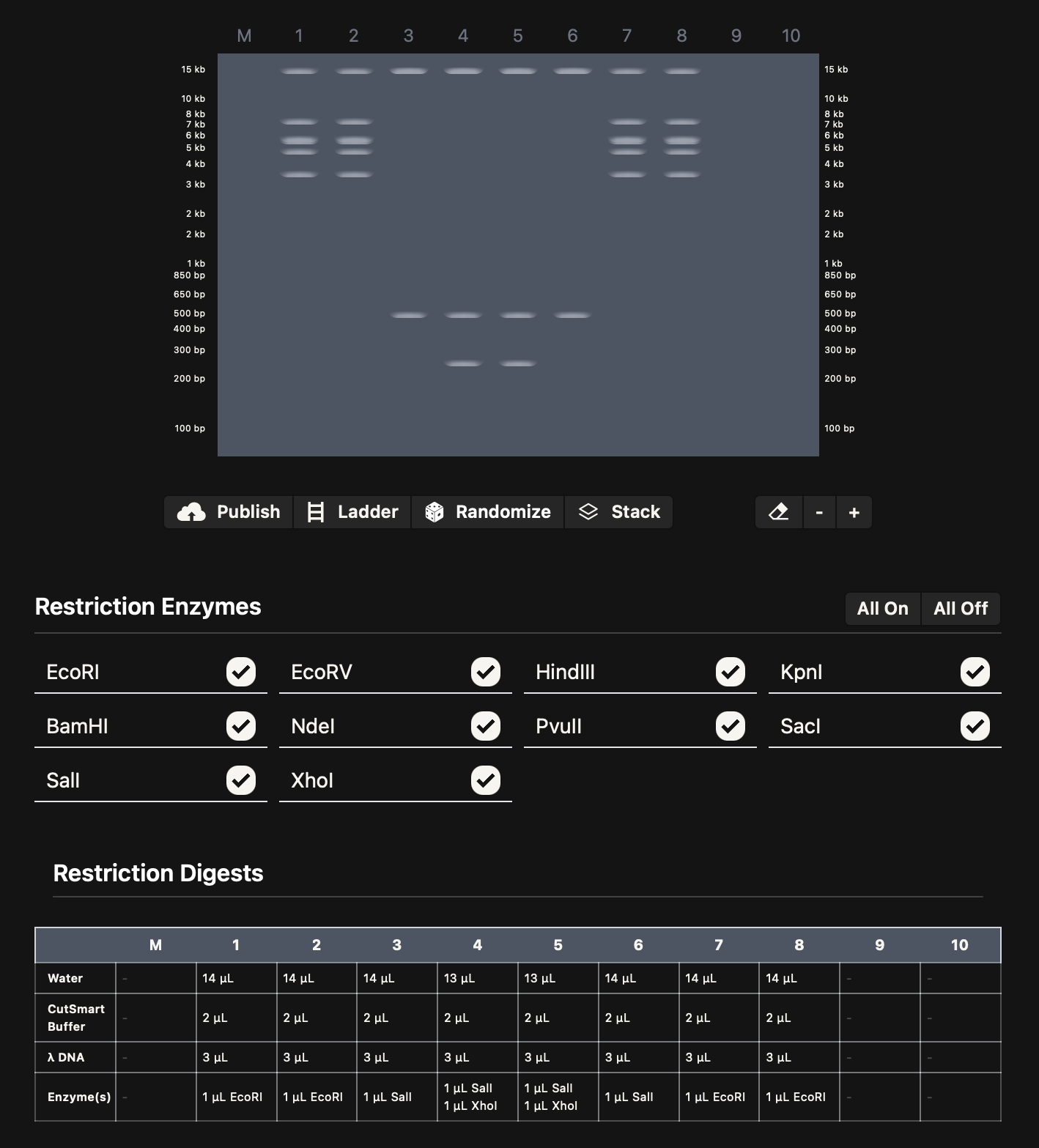

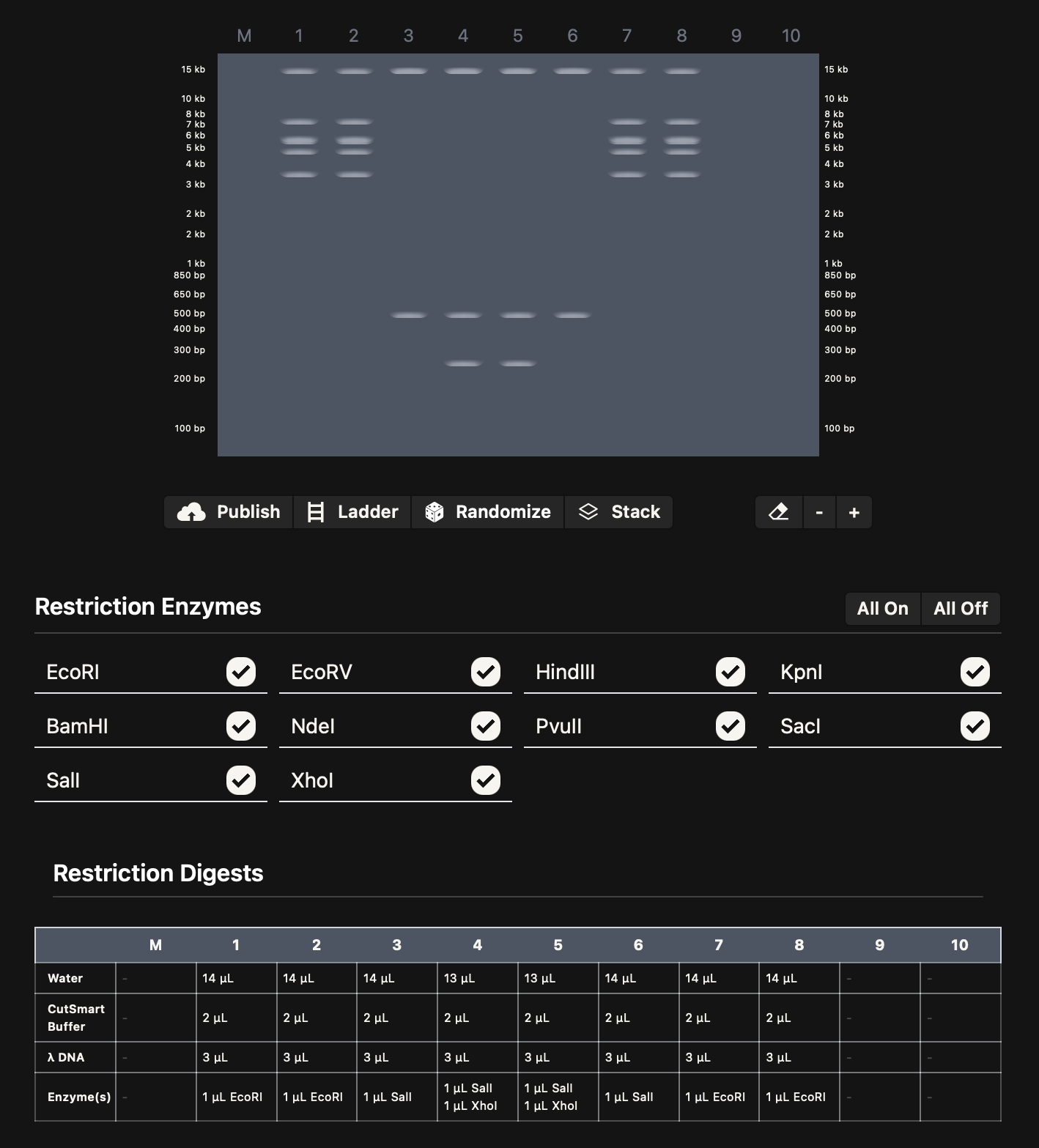

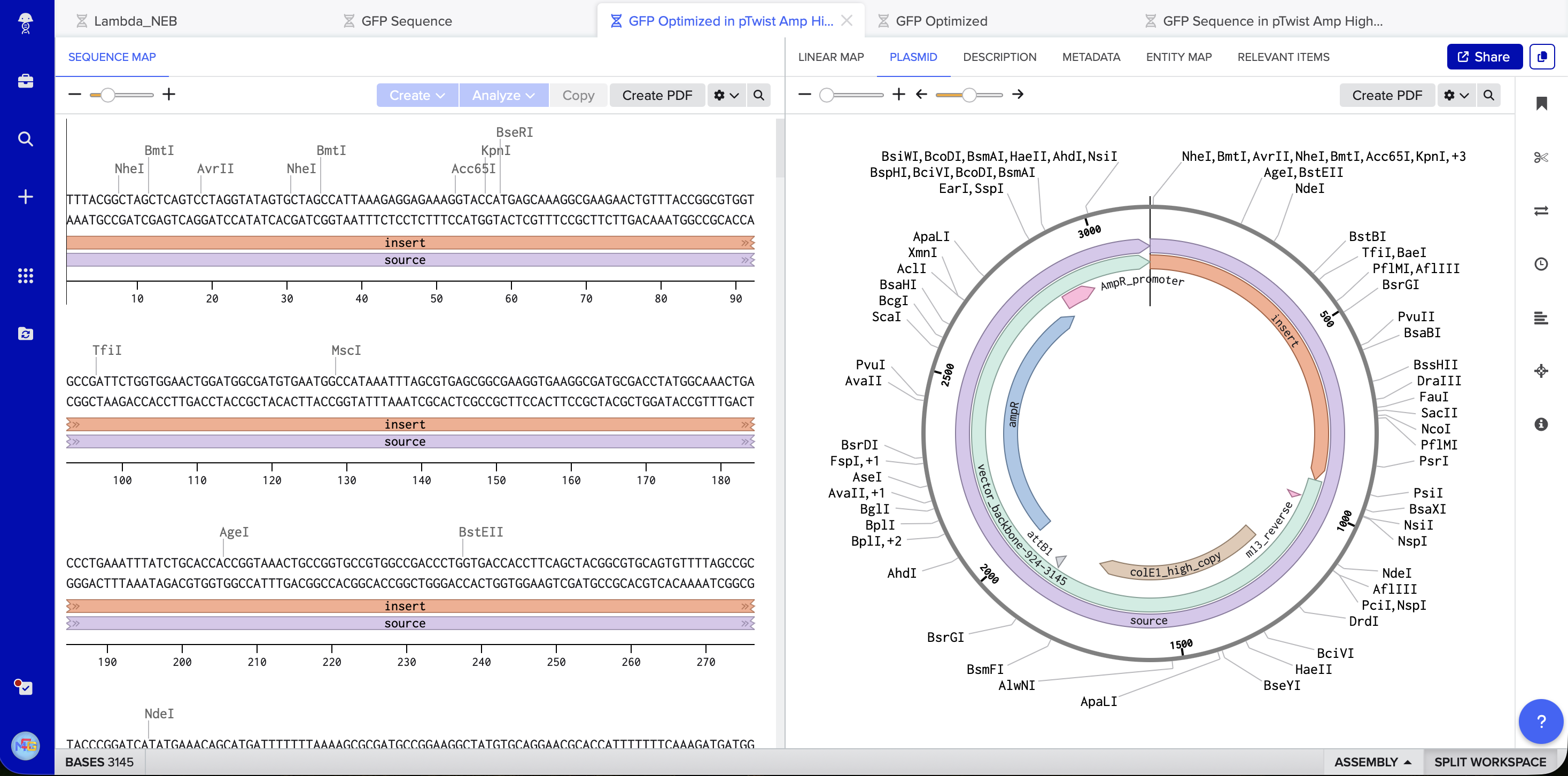

I imported the lambda sequence on Benchling, and started experimenting on Ronan’s website rcdonovan.com until I found a way to make a smiley face. I then used the restriction enzymes on Benchling to get the virtual digest from there. Below are images for my results

Part 3:

The protein of choice for me is green fluorescent protein (GFP), because it is widely used as a reporter in molecular and synthetic biology to visualize gene expression and protein localization. Its function is easy to assay, and it is well-characterized. I got the sequence from Uniprot

Protein sequence:

MSKGEELFTGVVPILVELDGDVNGHKFSVSGEGEGDATYGKLTLKFICTTGKLPVPWPTLVTTFSYGVQCFSRYPDHMKQHDFFKSAMPEGYVQERTIFFKDDGNYKTRAEVKFEGDTLVNRIELKGIDFKEDGNILGHKLEYNYNSHNVYIMADKQKNGIKVNFKIRHNIEDGSVQLADHYQQNTPIGDGPVLLPDNHYLSTQSALSKDPNEKRDHMVLLEFVTAAGITHGMDELYK

Reverse translated DNA sequence:

I got the sequence from Here

atgagcaaaggcgaagaactgtttaccggcgtggtgccgattctggtggaactggatggcgatgtgaacggccataaatttagcgtgagcggcgaaggcgaaggcgatgcgacctatggcaaactgaccctgaaatttatttgcaccaccggcaaactgccggtgccgtggccgaccctggtgaccacctttagctatggcgtgcagtgctttagccgctatccggatcatatgaaacagcatgatttttttaaaagcgcgatgccggaaggctatgtgcaggaacgcaccattttttttaaagatgatggcaactataaaacccgcgcggaagtgaaatttgaaggcgataccctggtgaaccgcattgaactgaaaggcattgattttaaagaagatggcaacattctgggccataaactggaatataactataacagccataacgtgtatattatggcggataaacagaaaaacggcattaaagtgaactttaaaattcgccataacattgaagatggcagcgtgcagctggcggatcattatcagcagaacaccccgattggcgatggcccggtgctgctgccggataaccattatctgagcacccagagcgcgctgagcaaagatccgaacgaaaaacgcgatcatatggtgctgctggaatttgtgaccgcggcgggcattacccatggcatggatgaactgtataaa

3.3 Codon Optimization

Codon optimization is needed because different organisms prefer different codons due to tRNA abundance. Using non-preferred codons can slow translation, reduce protein yield, or cause misfolding.

I optimized the GFP coding sequence for Escherichia coli, since it is commonly used for recombinant protein expression due to its fast growth, low cost, and well-developed genetic tools. Codon optimization increases translation efficiency and protein output in the chosen host.

This is the tool I used for optimization: Vectorbuilder

Optimized sequence:

atgagcaaaggcgaagaactgtttaccggcgtggtgccgattctggtggaactggatggcgatgtgaatggccataaatttagcgtgagcggcgaaggtgaaggcgatgcgacctatggcaaactgaccctgaaatttatctgcaccaccggtaaactgccggtgccgtggccgaccctggtgaccaccttcagctacggcgtgcagtgttttagccgctacccggatcatatgaaacagcatgatttttttaaaagcgcgatgccggaaggctatgtgcaggaacgcaccatttttttcaaagatgatggcaattacaaaacccgtgccgaagtgaaattcgaaggcgataccctggtgaatcgcattgaactgaaaggcattgattttaaagaagatggtaacattctgggccacaaactggaatacaactataacagccataacgtgtacattatggcggataaacagaaaaatggcattaaagtgaactttaaaattcgccataacattgaagatggctcagtgcagctggcggatcactatcagcagaacaccccgattggcgatggcccggttctgctgccggataaccactatctgagcacccagagcgcgctgtcgaaagatccgaacgaaaaacgcgatcacatggtgctgctggaatttgtgaccgccgcgggcatcacccatggtatggatgaactgtataaa

I noticed that the optimized sequence was almost identical to the unoptimized one. This is probably because the GFP is very well known and is already highly optimized for E. choli.

3.4 Producing the Protein

To produce GFP, the optimized DNA sequence can be cloned into an expression vector under a strong promoter. In a cell-dependent system, such as E. coli, the DNA is transcribed into mRNA by RNA polymerase and translated by ribosomes into protein. The GFP protein then folds into its functional fluorescent form.

3.5 Multiple Proteins from One Gene

In natural systems, a single gene can produce multiple proteins through mechanisms such as alternative splicing, alternative promoters, or alternative start codons. These processes allow different mRNA transcripts to be produced from the same DNA sequence, which are then translated into distinct protein isoforms with different functions or localizations.

Part 4:

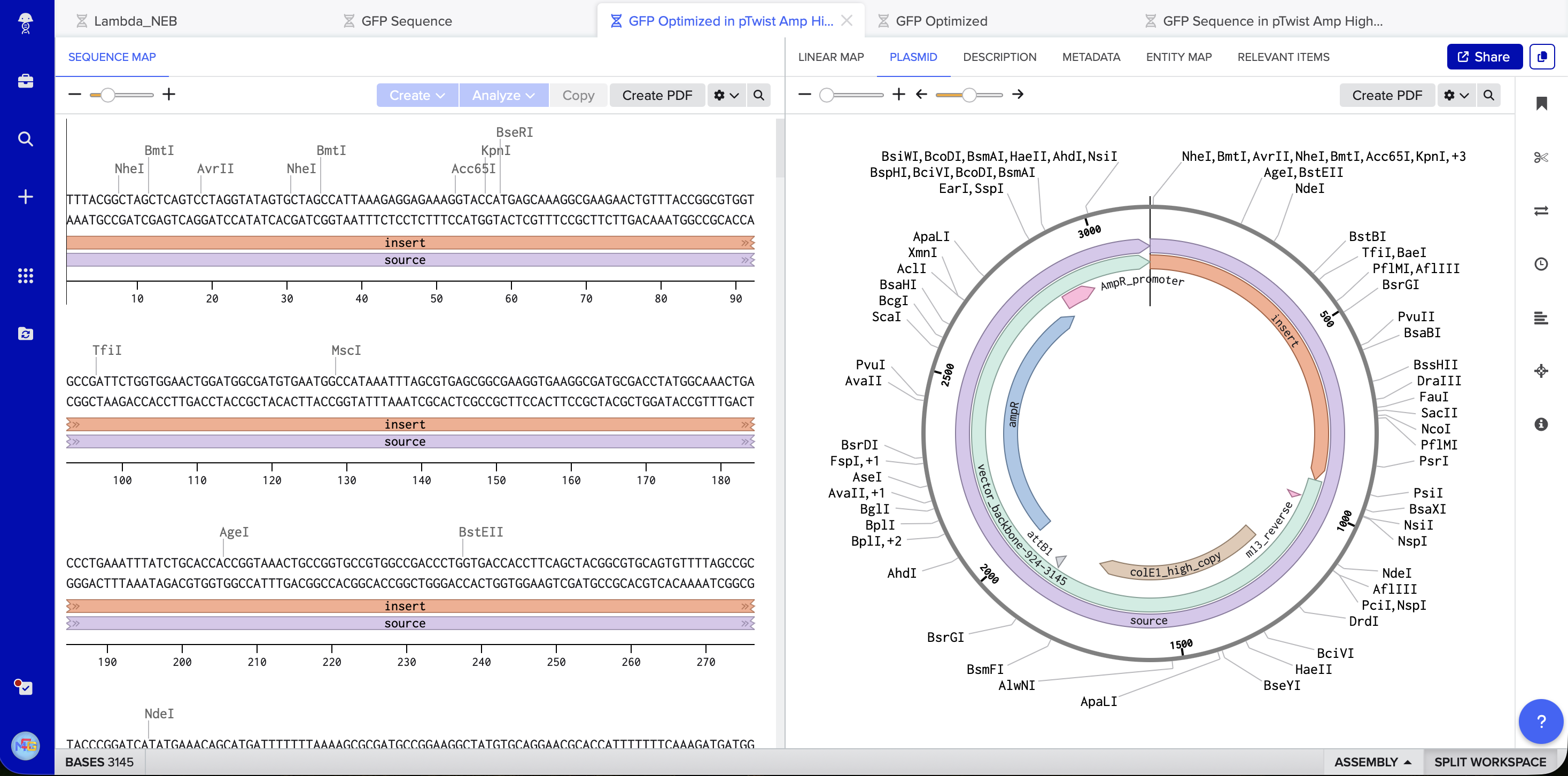

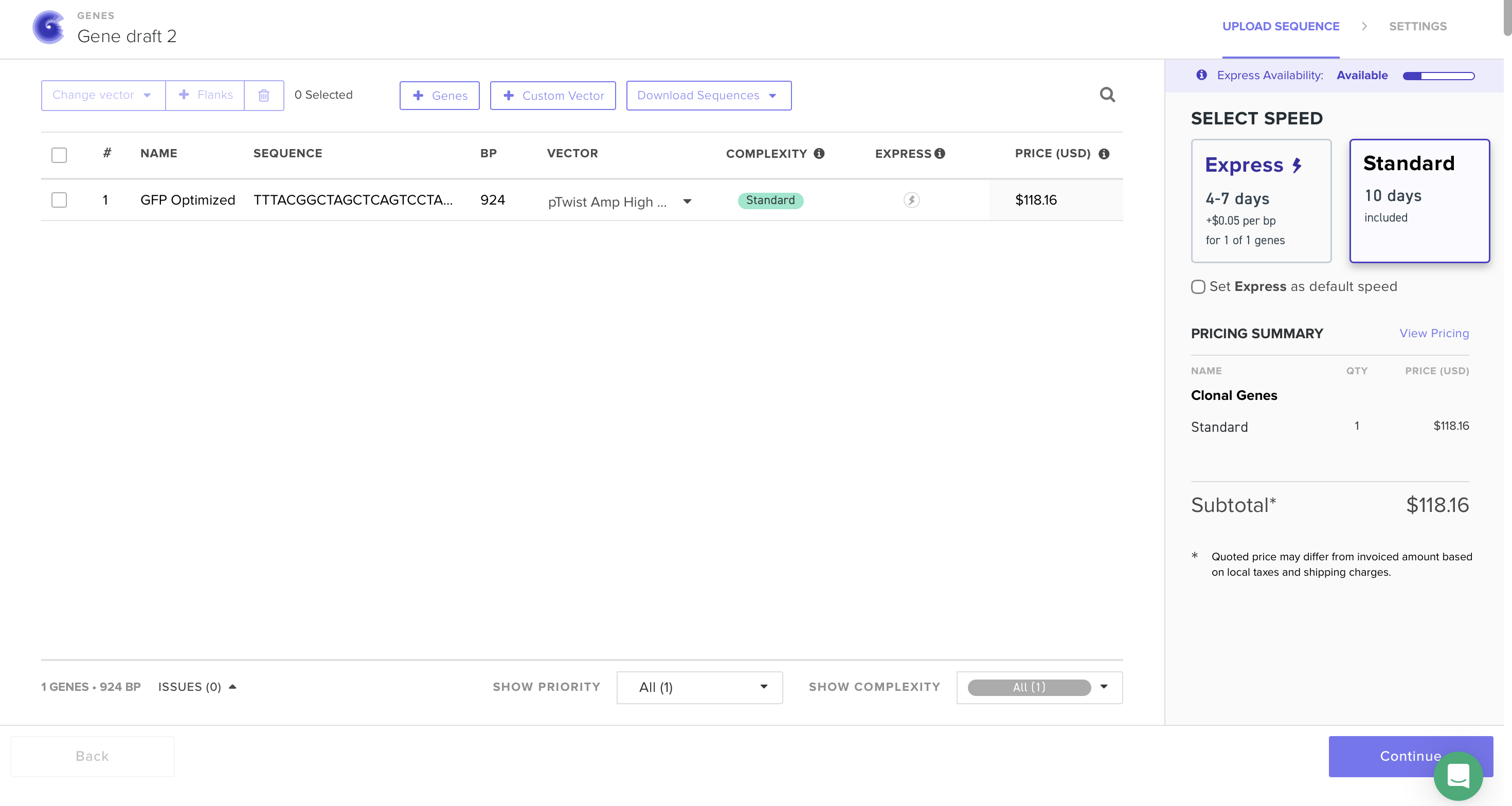

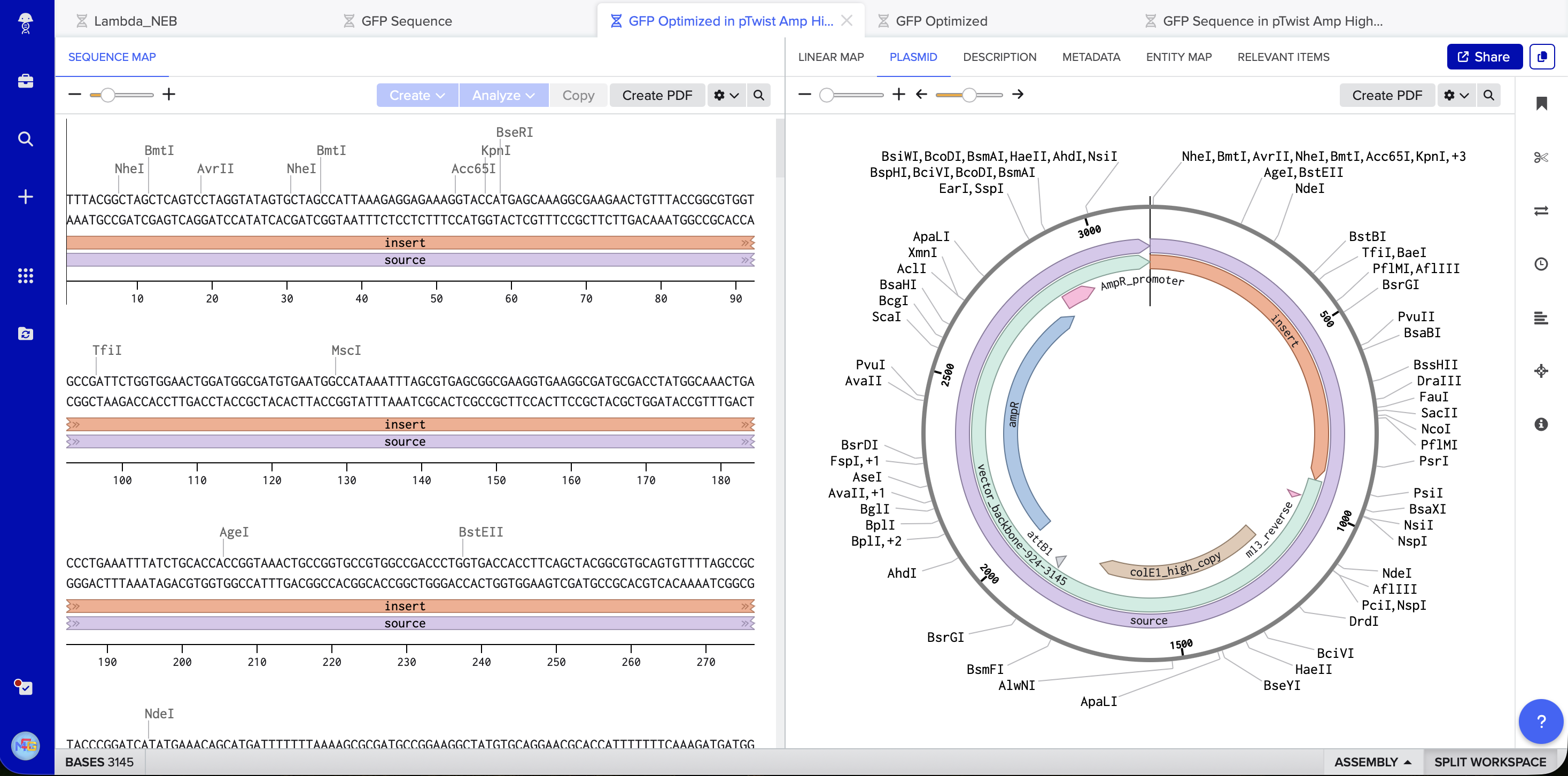

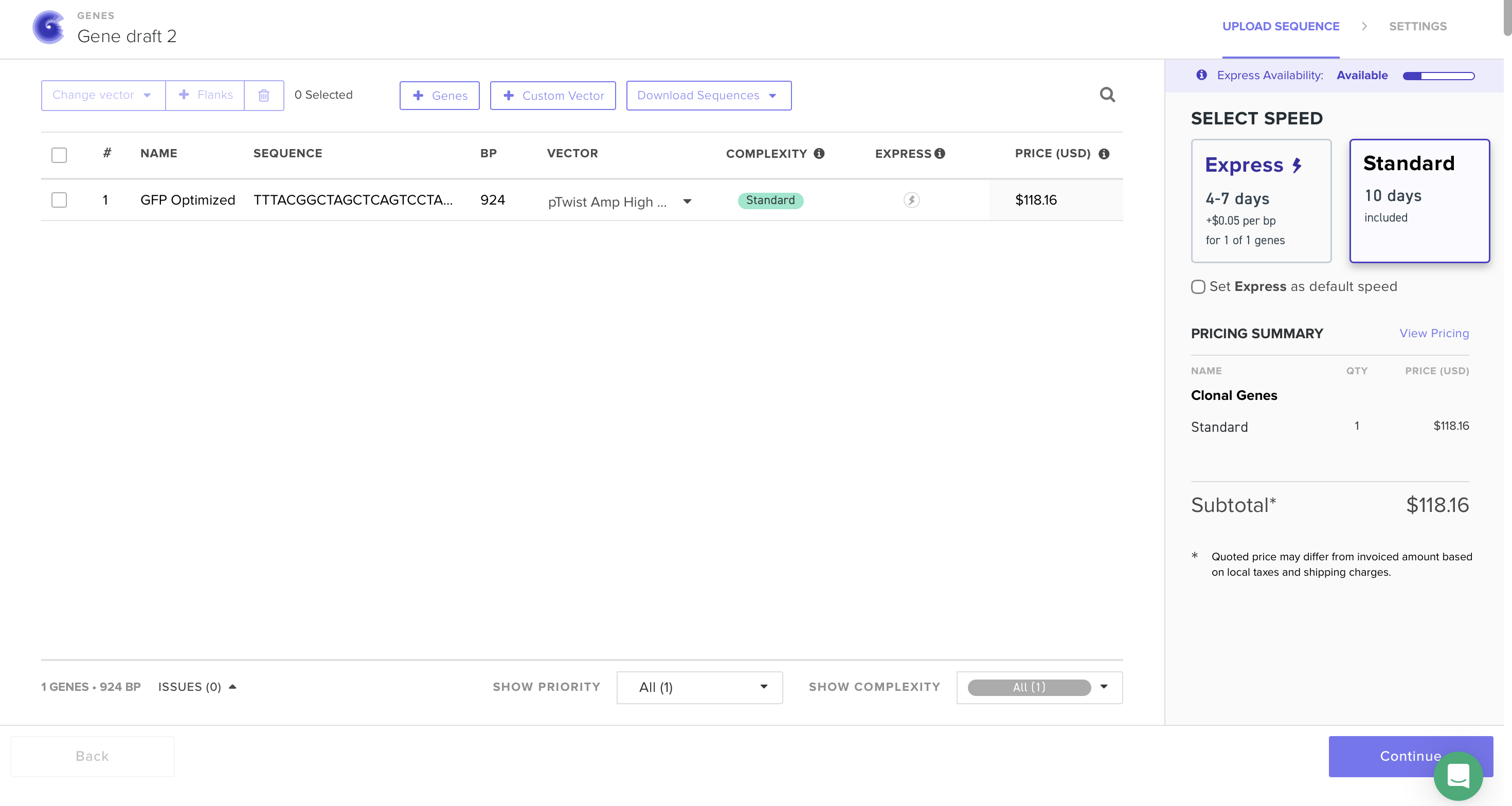

At this point I saw that GFP is used as an example, however I will still continue my work on it because of how much I am interested in its uses and I have already invested time on it. I followed the steps, made TWIST & Benchling accounts, imported the genome to Benchling, added the sequence and annotations for the promoter, RBS, start codon, sequence, HIS tags, stop codon, and terminator. Exported it as FASTA, imported it on TWIST, added the pTwist Amp High Copy vector. Exported it as Genbank, and opened it on Benchling to see the plasmid.

5.1

(i) I would sequence the human BRCA1 gene. It matters because BRCA1 variants strongly link to hereditary breast and ovarian cancer. Reading this DNA lets us detect pathogenic mutations, estimate cancer risk, and guide screening or preventive care. It also helps choose therapy since BRCA1 status affects response to PARP inhibitors.

(ii) I would use Illumina sequencing because it is high accuracy and high throughput which is important for detecting small mutations such as single nucleotide variants or small insertions and deletions.

Illumina sequencing is a second-generation sequencing technology because it sequences millions of DNA fragments simultaneously using amplified clusters on a sequencing flow cell.

The input is genomic DNA extracted from human cells, such as blood or saliva. Preparation steps:

- DNA extraction from the sample.

- Fragmentation of genomic DNA into shorter pieces (usually ~200–500 bp).

- Adapter ligation, where synthetic adapter sequences are attached to the ends of DNA fragments.

- PCR amplification to increase the number of DNA fragments containing the BRCA1 region.

- Loading onto a sequencing flow cell, where fragments bind and form clusters through bridge amplification.

Illumina sequencing uses sequencing-by-synthesis:

- DNA polymerase incorporates nucleotides into the growing DNA strand.

- Each nucleotide (A, T, C, G) has a different fluorescent label.

- After each incorporation, a camera detects the fluorescence signal.

- The signal identifies which base was added at that position.

Repeating this cycle allows the instrument to determine the DNA sequence.

- The output is millions of short DNA reads with associated quality scores, typically stored in FASTQ files. These reads are then aligned to the human reference genome to identify mutations or variants in the BRCA1 gene.

5.2 DNA Write

(i) I would synthesize a genetic circuit that allows bacteria to detect pollution or toxins and produce a fluorescent signal. This could help monitor contamination in environmental samples. Such biosensors could help detect pollution or toxins in water or soil quickly and cheaply.

(ii) I would use solid-phase phosphoramidite DNA synthesis combined with gene assembly (the method used by companies like Twist Bioscience).

Essential steps

- Chemical synthesis of short oligonucleotides (~150–200 nt) using phosphoramidite chemistry.

- Cleavage and purification of the oligos.

- Assembly of longer genes using PCR-based assembly or Gibson Assembly.

- Cloning into plasmid vectors for propagation in bacteria.

Limitations

• Length limits: individual oligos cannot exceed ~200 nt.

• Error accumulation during synthesis.

• Cost and time increase with longer constructs.

• Very large constructs (whole genomes) require complex assembly.

5.3 DNA Edit

(i) I would edit the PCSK9 gene in humans to reduce cholesterol levels and lower the risk of cardiovascular disease. PCSK9 regulates the number of LDL receptors on liver cells. Certain natural mutations that disable PCSK9 lead to lower LDL cholesterol levels and a reduced risk of heart disease. By editing the PCSK9 gene to mimic these naturally occurring protective mutations, it may be possible to create a long-term treatment for high cholesterol.

(ii) I would use CRISPR-Cas9 genome editing because it allows targeted modification of specific genes such as PCSK9.

- How the technology edits DNA

- A guide RNA (gRNA) is designed to match the DNA sequence within the PCSK9 gene.

- The Cas9 enzyme binds to the guide RNA to form a complex.

- The complex finds the matching sequence in the genome.

- Cas9 creates a double-strand break at that location.

- The cell repairs the break through non-homologous end joining (NHEJ), which can disrupt the gene and reduce PCSK9 activity.

Reducing PCSK9 function increases the number of LDL receptors on liver cells, helping remove LDL cholesterol from the bloodstream.

- Preparation and inputs

To perform the edit, several components are required:

• A guide RNA targeting the PCSK9 gene

• The Cas9 enzyme or a plasmid encoding Cas9

• A delivery system such as lipid nanoparticles or viral vectors

• Target cells, typically liver cells where PCSK9 is expressed

The guide RNA must be carefully designed to ensure specificity for the PCSK9 gene.

- Limitations

There are several limitations to this editing approach:

• Off-target edits may occur at similar DNA sequences elsewhere in the genome

• Editing efficiency may vary depending on the cell type and delivery method

• Delivery challenges, especially when targeting specific tissues in the body

• Ethical and regulatory considerations related to editing human genes

Despite these limitations, editing PCSK9 is considered a promising approach for treating high cholesterol and preventing cardiovascular disease.

Sources used for homework:

Google searches and slides for Lecture 2