Week 1 HW: Principles and Practices

Table of Contents

- 1) Biological engineering application / tool

- 2) Governance / policy goals

- 3) Governance actions

- 4) Risk analysis

- 5) Scoring governance actions

- 6) Prioritization recommendation

- 7) Reflection

- 8) Project + governance overview

- Homework Questions from Professor Jacobson

- Homework Questions from Dr. LeProust

- Homework Question from George Church

1) Biological engineering application / tool I want to develop (and why)

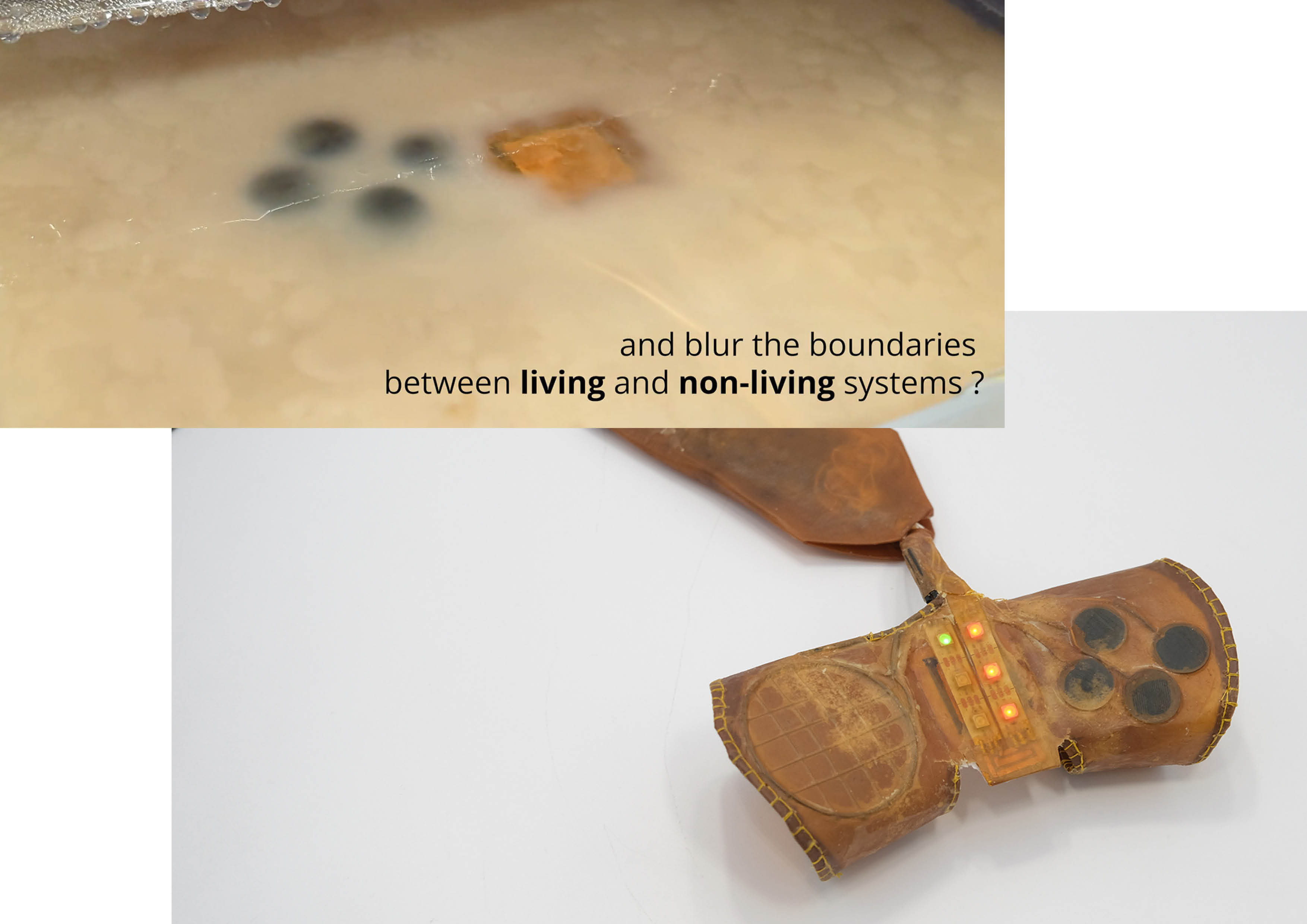

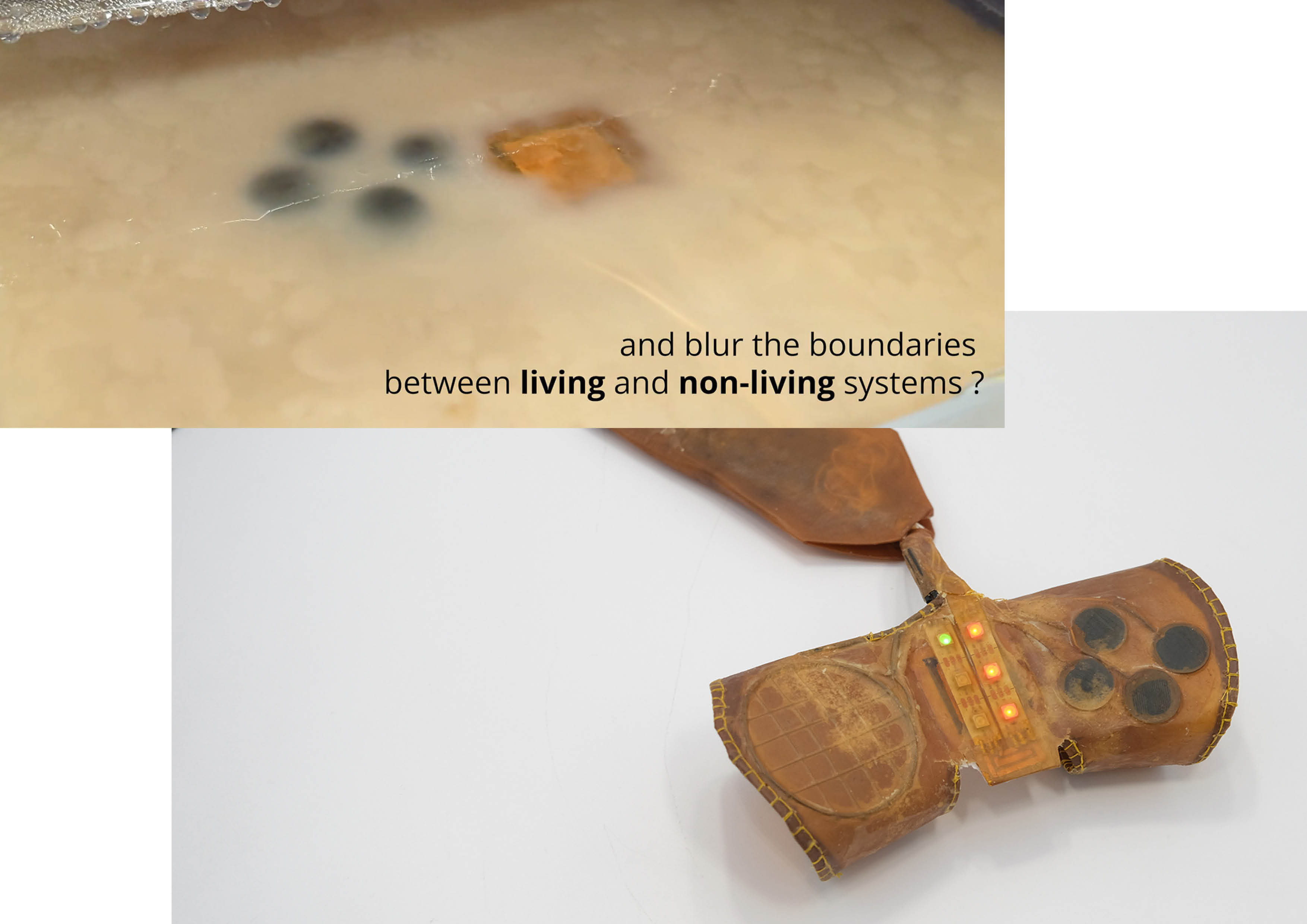

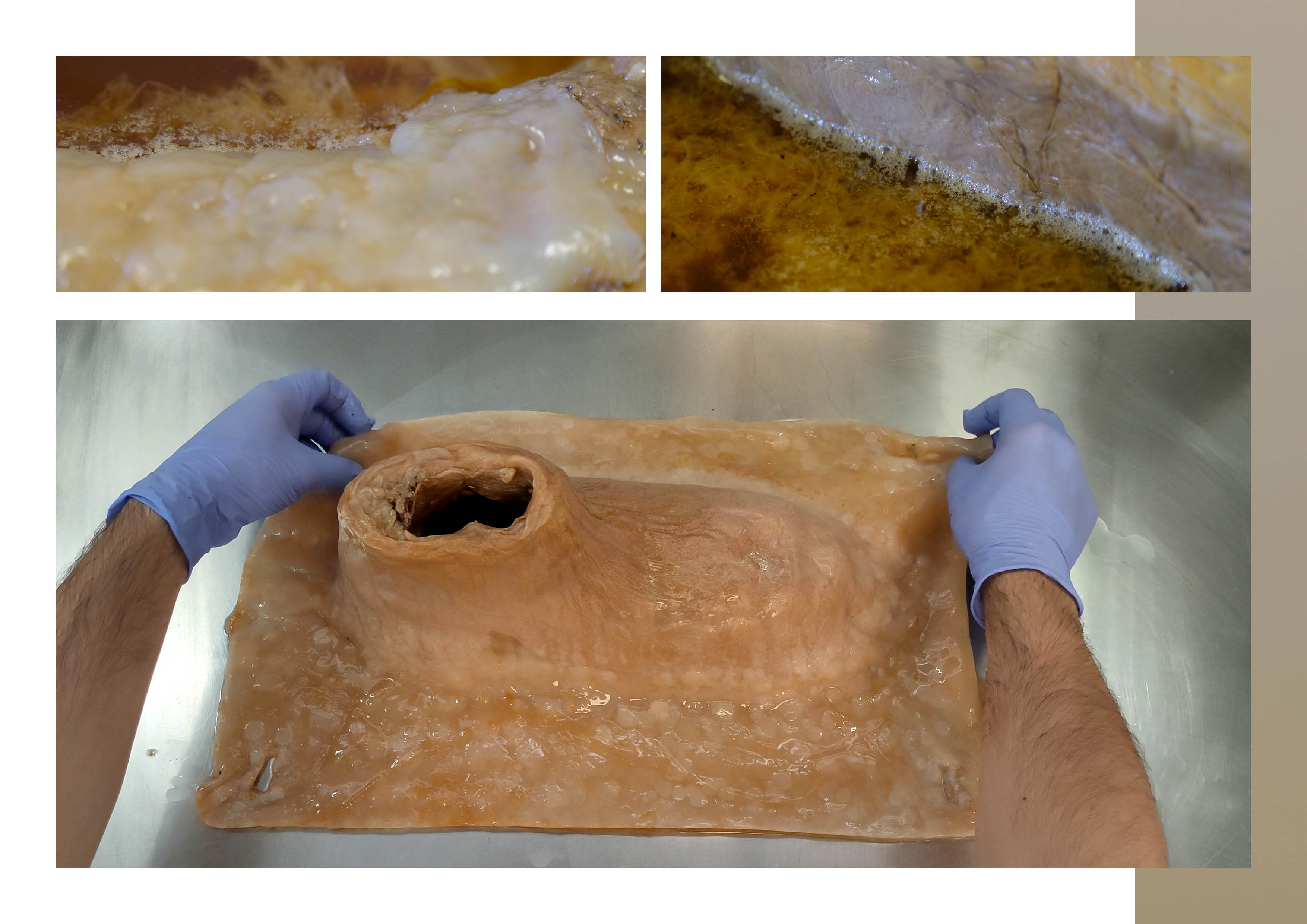

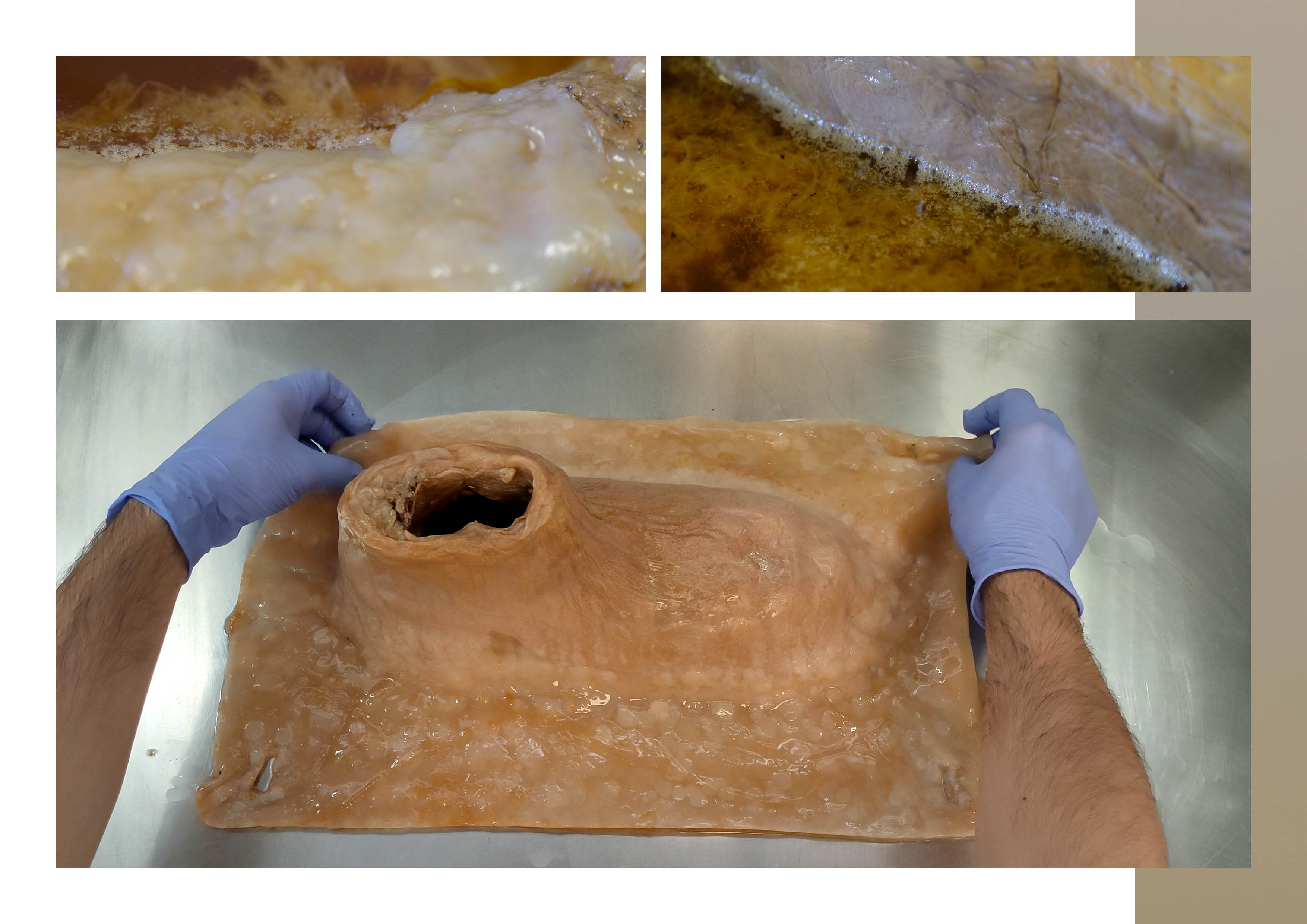

Application / tool: Growing interactive surfaces from bacterial cellulose

I already know how to grow 3D artifacts in bacterial cellulose (BC). In this project, I want to develop a biological functionalization workflow that turns grown BC artifacts into interactive surfaces, measured through impedance-based sensing (e.g., tactile volumetric response, sensitivity to pressure), with minimal embedded electronics.

The goal is not to integrate electronic components into the object, but to make the material itself behave as an interface. Electrodes are used only for readout and characterization, not as active elements.

Concretely, this toolchain would include:

Biological functionalization workflow

Modulating the extracellular matrix during growth (for example through protein secretion into the BC network) to tune impedance-based electrical behavior and enable material-level interaction.

Measurement interface (readout only)

A simple electrode setup and impedance readout used to validate, compare, and characterize material responses across different growth and functionalization conditions.

Optional integration (if time allows)

Integrating functionalization into my existing 3D growth workflow (molds, scaffolds, and growth conditions), so objects can be grown already functionalized rather than treated after fabrication.

Why this matters

Most interactive devices are built by assembling electronics into synthetic materials. This project proposes a different paradigm:

Instead of fabricating objects and then adding interactivity, objects are cultivated so that interactivity emerges from biological growth and organization.

This matters for several reasons:

- Sustainable interfaces: biodegradable material base, low-energy fabrication, and the possibility of metabolic degradation or recycling.

- New interface paradigms: artifacts become processual and time-based, where growth history and material state shape interaction.

- Research tool: impedance acts as a lens to study how morphology, hydration, and growth dynamics become measurable signals.

2) Governance / policy goals for an ethical future

Inspired by the iGEM Safety framework, the ethical challenges of this project are framed around biosafety, environmental responsibility, and responsible use of engineered living materials. Because this work lowers the barrier to growing functional living artifacts, governance should guide not only what is built, but how materials are grown, handled, and disposed of.

Goal A — Ensure biosafety and non-malfeasance during cultivation and use

Although bacterial cellulose–producing organisms are generally considered low-risk, improper handling or uncontrolled experimentation could lead to contamination or unintended exposure.

Sub-goals

- A1. Safe handling and containment

Ensure cultivation, functionalization, and testing are conducted using appropriate hygiene, containment, and separation practices, especially when combining multiple biological agents.

- A2. Transparency about biological modification

Maintain a clear distinction between engineered biological components and non-biological elements (e.g., electrodes used only for readout).

Goal B — Prevent environmental harm and unintended release

A key motivation of this work is sustainability: growing interactive surfaces from living matter that can eventually be degraded or recycled. This benefit only holds if environmental risks are managed.

Sub-goals

- B1. Controlled disposal and deactivation

Ensure BC artifacts, growth media, and byproducts are deactivated or degraded before disposal, preventing accidental release into wastewater or compost systems.

- B2. Avoid persistent or toxic additives

Favor biological or biodegradable functionalization methods over persistent chemicals or heavy metals to preserve viable end-of-life pathways.

Goal C — Promote responsible development of living interactive interfaces

Because this project treats living materials as sensing and computational substrates, governance should guide how such systems are framed and used, particularly outside the lab.

Sub-goals

- C1. Prevent misleading claims

Avoid presenting biologically functionalized materials as autonomous, intelligent, or fully controllable systems.

- C2. Limit sensing to material interaction

Encourage applications focused on material state (touch, pressure, hydration).

3) Governance actions (comparative overview)

The table below summarizes three governance actions addressing biosafety, environmental impact, and responsible use of grown bacterial cellulose artifacts. Each action is evaluated across its purpose, design, assumptions, and potential risks.

| Governance Action | Purpose (What changes?) | Design (How it works / Who acts?) | Key Assumptions | Risks of Failure & “Success” |
|---|---|---|---|---|
| Option 1 — Biological process & provenance documentation | Current documentation emphasizes performance over biological process. This action requires explicit documentation of organism source, biological modification, and separation between biological and technical components. | • Actors: instructors, research labs, journals, funding agencies • Lightweight 1–2 page template integrated into coursework or reports • Descriptive, not approval-based | Transparency reduces misuse, confusion, and overclaiming, and improves accountability if issues arise. | Failure: becomes a checkbox exercise with little reflection. Success risk: excessive formalization discourages exploratory research. Mitigation: keep requirements descriptive and allow uncertainty. |
| Option 2 — Standardized deactivation & disposal protocols | Living materials are often treated as benign, leading to inconsistent disposal. This action establishes mandatory deactivation and disposal procedures for living artifacts and growth media. | • Actors: lab managers, EHS offices, instructors • Clear SOPs (heat, chemical, drying) • Labeled waste streams and visible instructions | Improper disposal is the most likely real-world risk, especially as production scales or decentralizes. | Failure: users bypass protocols due to inconvenience or lack of enforcement. Success risk: overly strict rules push work into informal or unregulated spaces. Mitigation: make compliance easy and well-supported. |
| Option 3 — Readout-limited interface design norms | Biological sensing systems can drift toward surveillance or control narratives. This action promotes norms framing living materials as readout-based material interfaces, not monitoring systems. | • Actors: researchers, designers, companies • Explicit framing in documentation and demos • Focus on local, material-state signals | Early design norms can influence long-term application trajectories and public expectations. | Failure: norms ignored in commercial or extractive contexts. Success risk: may limit some beneficial applications. Mitigation: scope norms to public, non-clinical, and creative deployments. |

4) Potential risks, use, misuse, and governance considerations

| Concern Category | Plausible Worst-Case Scenario | Why This Matters | Governance Focus |
|---|---|---|---|
| Biosecurity | Engineered bacteria persist outside intended settings | BC artifacts are wet and long-lived | Provenance + deactivation |
| Lab & user safety | Artifacts become contamination reservoirs | Objects may be touched or deployed publicly | Hygiene + stabilization |
| Environmental impact | Degradation causes microbial blooms | Biodegradable ≠ neutral at scale | Disposal pathways |
| Waste & end-of-life | Functionalization alters compost systems | Degradation is context-dependent | Additive disclosure |
| Misuse of sensing | Interfaces repurposed for monitoring | Ambiguous signals enable misuse | Readout limits |
| Overclaiming | Materials framed as autonomous | False trust and adoption | Transparency |
| Decentralized production | DIY use without safeguards | Escapes institutional oversight | Education-first governance |

5) Scoring governance actions

Scoring: 1 = best, 2 = moderate, 3 = weak

| Policy Goal / Criterion | Option 1 | Option 2 | Option 3 |
|---|---|---|---|
| Prevent biosecurity incidents | 2 | 1 | 3 |
| Respond to incidents | 1 | 2 | 3 |
| Prevent lab safety incidents | 2 | 1 | 3 |
| Protect environment | 2 | 1 | 2 |
| Minimize burden | 1 | 2 | 1 |
| Feasibility | 1 | 2 | 2 |
| Not impede research | 1 | 2 | 2 |
| Promote constructive use | 1 | 2 | 2 |

6) Prioritization and recommendation

Priority order

- Option 2 — Deactivation & disposal protocols

- Option 1 — Provenance documentation

- Option 3 — Readout-limited norms

Option 2 addresses the most immediate and likely harm: environmental release and waste. Option 1 supports accountability and response without limiting research. Option 3 addresses longer-term risks if the technology scales.
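The scores from section 5 can be tallied with a short script. This is only a sketch: the unweighted sum is an assumption of mine, not a method used above, and the variable names are illustrative. It is included to make the arithmetic behind the comparison explicit.

```python
# Scores copied from the table in section 5 (1 = best, 3 = weak).
# Tuple order per row: (Option 1, Option 2, Option 3).
SCORES = {
    "Prevent biosecurity incidents": (2, 1, 3),
    "Respond to incidents": (1, 2, 3),
    "Prevent lab safety incidents": (2, 1, 3),
    "Protect environment": (2, 1, 2),
    "Minimize burden": (1, 2, 1),
    "Feasibility": (1, 2, 2),
    "Not impede research": (1, 2, 2),
    "Promote constructive use": (1, 2, 2),
}

OPTIONS = ("Option 1", "Option 2", "Option 3")

# Unweighted total per option (lower is better). Equal weighting is an
# assumption made for illustration only.
totals = {
    name: sum(row[i] for row in SCORES.values())
    for i, name in enumerate(OPTIONS)
}

for name, total in sorted(totals.items(), key=lambda item: item[1]):
    print(f"{name}: {total}")
```

With equal weights, Option 1 has the lowest total and Option 2 comes second. The priority order above therefore rests on the judgment that the rows where Option 2 scores best (biosecurity, lab safety, environment) matter most, rather than on the raw totals.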

7) Reflection

This exercise highlighted that the main risks of growing interactive living artifacts arise from success and scale, not malicious intent. Even biodegradable materials can become harmful when produced or discarded in large quantities. Another key insight is how easily biological sensing can be reframed beyond material interaction.

Governance should therefore focus on practice, documentation, and lifecycle management, rather than strict prohibition.

At this stage, the cultivation of interactive BC artifacts should remain confined to controlled research and educational contexts, and not be deployed in public or commercial environments without additional review.

8) Project + governance overview

- BC Interactive Artifacts
  - Growth
    - 3D molds
    - Functionalized matrix
  - Interaction
    - Impedance
    - Tactile response
  - Risks
    - Biosecurity
    - Waste
    - Misuse
  - Governance
    - Disposal protocols
    - Provenance docs
    - Readout limits

Strategies to ensure an ethical biological future (project-integrated)

The table below summarizes the concrete strategies embedded in my final project to ensure it contributes to an ethical biological future. Rather than external rules, these strategies are implemented through design choices, constraints, and documentation practices.

| Strategy | Ethical Principle | What Risk It Addresses | How It Is Implemented in the Project | Why This Matters |
|---|---|---|---|---|
| Readout-first interface design | Observation over control | Instrumentalization of living systems; drift toward surveillance or behavioral monitoring | Electronics are used only for impedance readout; no actuation or feedback loops acting on the living material; signals framed as material-state indicators | Limits coercive or extractive uses of living matter and preserves biological agency |
| Scale and context limitation | Responsibility through constraint | Environmental harm and biosafety risks arising from success and scale | Project framed as research/educational tool; growth and testing limited to controlled lab or studio environments; public or commercial deployment explicitly excluded without further review | Prevents premature deployment and unmanaged scaling of living interfaces |
| Lifecycle-aware design | End-of-life accountability | Ecological disruption from disposal or accumulation of living materials | Preference for biologically degradable functionalization; explicit deactivation and disposal protocols included in the workflow | Sustainability is addressed across the full material lifecycle, not only fabrication |
| Transparency about biological modification | Epistemic responsibility | Overclaiming, black-box narratives, and misleading representations of “living intelligence” | Clear distinction between engineered biological processes and technical readout systems; documentation of uncertainty and variability | Builds trust and prevents misuse driven by misunderstanding or hype |
| Reflexive governance practice | Ethics as an evolving process | Static rules becoming obsolete as techniques evolve | Periodic reassessment of risks; use of lightweight governance tools (documentation, norms, constraints) rather than fixed prohibitions | Allows ethical considerations to evolve alongside the project and its capabilities |

Homework Questions from Professor Jacobson:

Question 1 — Polymerase error rate, genome size, and biological error correction

DNA polymerase, the molecular machinery responsible for copying DNA, has a non-zero error rate. As presented in the lecture slides, the error rate of an error-correcting DNA polymerase is approximately 1 error per 10⁶ base incorporations. While this error rate is very low at the scale of individual nucleotides, it becomes significant when compared to the size of the human genome, which is on the order of 3.2 × 10⁹ base pairs. If replication relied solely on polymerase accuracy, this discrepancy would imply the accumulation of thousands of errors during each complete genome replication, which would be incompatible with stable inheritance and organismal viability.

Biology resolves this apparent mismatch through multiple layers of error correction rather than relying on perfect synthesis. First, many DNA polymerases include proofreading activity (3′→5′ exonuclease) that detects and removes incorrectly incorporated bases during replication; the figure of roughly 1 in 10⁶ quoted above already describes such an error-correcting polymerase. Second, additional post-replication repair systems, such as mismatch repair pathways (e.g., the MutS system shown in the slides), identify and correct errors that escape polymerase proofreading. Together, these layered mechanisms reduce the effective error rate of DNA replication well below what the polymerase achieves alone, allowing large genomes like the human genome to be copied reliably despite the intrinsic imperfection of polymerase activity.
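The "thousands of errors" estimate follows directly from the two numbers quoted above. A minimal sketch of that arithmetic (the function and constant names are illustrative, not from the slides):

```python
def expected_errors_per_replication(genome_size_bp: int, bases_per_error: int) -> float:
    """Expected number of miscopied bases in one full pass over the genome."""
    return genome_size_bp / bases_per_error


HUMAN_GENOME_BP = 3_200_000_000  # ~3.2 x 10^9 bp, as quoted above
BASES_PER_ERROR = 1_000_000      # 1 error per 10^6 incorporations, as quoted above

errors = expected_errors_per_replication(HUMAN_GENOME_BP, BASES_PER_ERROR)
print(f"Expected errors per genome copy: {errors:.0f}")  # prints 3200
```

At about 3,200 expected errors per copy, polymerase accuracy alone is clearly not enough, which is why the additional repair layers matter.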

Question 2 — Coding redundancy and why most theoretical DNA codes do not work in practice

Due to the redundancy of the genetic code, an average human protein can, in theory, be encoded by an extremely large number of different DNA sequences. As shown in the slides, the coding sequence of an average human protein is approximately 1036 base pairs long, corresponding to roughly 345 amino acids. Because many amino acids can be encoded by multiple synonymous codons, the number of possible nucleotide sequences that map to the same amino acid sequence is the product of the codon counts at each position and therefore grows exponentially with protein length. In principle, this means there are astronomically many distinct DNA sequences that could encode the same protein sequence.

In practice, however, most of these theoretically valid codes do not function effectively in living systems. The lecture slides highlight several biological constraints that limit which sequences work. Certain DNA or RNA sequences form secondary structures with unfavorable free energy that interfere with transcription or translation. GC content bias can destabilize sequences or alter expression efficiency. Additionally, synthesis and assembly processes have their own error profiles, making some sequences more fragile than others. Finally, translation depends on interactions with cellular machinery such as ribosomes and tRNA pools, meaning that codon usage and sequence context matter beyond simple amino acid encoding. As a result, although the genetic code is redundant in theory, only a narrow subset of possible DNA sequences reliably produce functional proteins in practice.
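To give a sense of scale for the redundancy described above, the sketch below counts synonymous coding sequences using the codon degeneracy of the standard genetic code. The 345-residue estimate assumes all 20 amino acids are equally frequent, which is a simplification of mine and not a figure from the slides. The peptide "MKWL" is an arbitrary example.

```python
import math

# Number of synonymous codons per amino acid in the standard genetic code
# (one-letter amino acid codes; stop codons excluded).
CODON_DEGENERACY = {
    "L": 6, "S": 6, "R": 6,
    "A": 4, "G": 4, "P": 4, "T": 4, "V": 4,
    "I": 3,
    "F": 2, "Y": 2, "H": 2, "Q": 2, "N": 2, "K": 2, "D": 2, "E": 2, "C": 2,
    "M": 1, "W": 1,
}


def synonymous_sequence_count(protein: str) -> int:
    """Exact number of DNA sequences encoding the given amino acid sequence."""
    count = 1
    for amino_acid in protein:
        count *= CODON_DEGENERACY[amino_acid]
    return count


def log10_count_uniform(length_aa: int) -> float:
    """log10 of the sequence count, ASSUMING uniform amino acid composition."""
    mean_log = sum(math.log10(d) for d in CODON_DEGENERACY.values()) / len(CODON_DEGENERACY)
    return length_aa * mean_log


print(synonymous_sequence_count("MKWL"))  # 1 * 2 * 1 * 6 = 12
print(f"about 10^{log10_count_uniform(345):.0f} sequences for 345 residues")
```

Under that uniform-composition assumption the count comes out near 10^147. The constraints listed above (secondary structure, GC content, codon usage) are what cut this space down to the sequences that actually express well.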

Homework Questions from Dr. LeProust:

1. What’s the most commonly used method for oligo synthesis currently?

The most commonly used method for oligonucleotide synthesis today is solid-phase phosphoramidite chemical synthesis, originally developed by Caruthers in the early 1980s and still the industry standard. In this approach, DNA is synthesized base by base on a solid support (typically controlled pore glass or functionalized silica). Each synthesis cycle consists of four repeated chemical steps: coupling of a phosphoramidite nucleotide, capping of unreacted chains, oxidation to stabilize the backbone, and deprotection (deblocking) to expose the next reactive site. This cycle is repeated sequentially to build the desired oligo.

This method dominates because it is highly automatable, scalable, and compatible with parallelization, especially in modern platforms such as silicon-based microarrays (e.g., Twist Bioscience). However, it is fundamentally a stepwise chemical process, meaning that each added nucleotide has a non-zero failure rate. Even with very high per-step efficiencies (>99.5%), errors accumulate with length, which directly constrains how long oligos can be synthesized reliably. This intrinsic accumulation of errors explains many of the downstream limitations discussed in the following questions.

2. Why is it difficult to make oligos longer than ~200 nt via direct synthesis?

The primary difficulty in synthesizing oligos longer than ~200 nucleotides arises from the cumulative error rate of stepwise chemical synthesis. Each coupling step has a small probability of failure (incomplete coupling, side reactions, or truncation). While a single error is unlikely at short lengths, the probability of obtaining a full-length, error-free molecule drops exponentially as the number of synthesis cycles increases. Beyond ~200 nt, the fraction of correct full-length molecules becomes very low, even if average synthesis efficiency remains high.

In addition to error accumulation, sequence-dependent effects further complicate long oligo synthesis. Regions with high or low GC content, homopolymers, inverted repeats, or strong secondary structures (e.g., hairpins) reduce coupling efficiency and increase truncation or deletion events. Purification also becomes more challenging: separating full-length products from truncated sequences is increasingly inefficient as length grows. As a result, while advances in chemistry have pushed this limit somewhat (e.g., validated synthesis of ~300–500 nt in controlled contexts), ~200 nt remains the practical and economical upper bound for routine, high-fidelity direct synthesis.

3. Why can’t you make a 2000 bp gene via direct oligo synthesis?

A 2000 bp gene cannot be synthesized directly because, in stepwise chemical synthesis, the yield of correct full-length product falls exponentially with length. At that size, the compounded error rate would make the fraction of correct full-length molecules negligible. Even if synthesis chemistry allowed chain extension to 2000 nt, the resulting product pool would be dominated by truncated or mutated sequences (deletions and substitutions), rendering it unusable without extensive correction.

Instead, long genes are produced through a hierarchical assembly strategy: shorter oligos (typically 40–200 nt) are first synthesized, then enzymatically assembled using methods such as PCR-based gene assembly or ligation. These assembled fragments are subsequently cloned and sequence-verified, allowing error correction through selection rather than chemistry. This separation of concerns—chemical synthesis for short, precise building blocks, and biological processes for long-range assembly and error correction—is fundamental. It reflects a broader principle emphasized in the course: chemistry writes locally, biology validates globally. Direct chemical synthesis alone cannot replace this division of labor for long DNA constructs.
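The length limits discussed in the answers above can be illustrated with a simple model in which every cycle succeeds independently with the same probability. This is a sketch under assumptions: 0.995 is taken from the ">99.5%" per-step figure mentioned earlier, the number of cycles is approximated by the oligo length, and sequence-dependent effects and base-level errors are ignored.

```python
def full_length_fraction(length_nt: int, coupling_efficiency: float) -> float:
    """Fraction of chains that reach full length if each of ~length_nt
    cycles succeeds independently with the given efficiency."""
    return coupling_efficiency ** length_nt


COUPLING_EFFICIENCY = 0.995  # assumed per-step efficiency (illustrative)

for length in (20, 60, 200, 2000):
    fraction = full_length_fraction(length, COUPLING_EFFICIENCY)
    print(f"{length:>5} nt: {fraction:.2e} of chains reach full length")
```

In this model roughly a third of chains survive to 200 nt, while at 2000 nt fewer than one chain in ten thousand does. That gap is what hierarchical assembly from short oligos is designed to avoid.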

Homework Question from George Church:

Given the examples in slides #2 and #4, where biological systems use structured “codes” to mediate interactions between polymers (NA:NA via base pairing and AA:NA via translation and binding domains), an AA:AA interaction code would most plausibly rely on side-chain chemistry and spatial complementarity rather than a discrete symbolic alphabet.

Unlike nucleic acids, amino acids do not pair through a uniform geometry or hydrogen-bonding scheme. Instead, proteins interact through combinations of hydrophobicity, charge, polarity, aromatic stacking, and steric fit. Therefore, an AA:AA code is inherently analog and multidimensional, not digital. A reasonable “code” would be based on classes of side-chain interactions—for example, positively charged residues pairing with negatively charged ones, hydrophobic patches aligning with hydrophobic patches, or aromatic residues engaging in π–π stacking. This resembles a physicochemical code rather than a base-pair code.

In practice, such a code already exists implicitly in protein–protein interaction domains, coiled-coil motifs, antibody–antigen interfaces, and self-assembling protein systems. From a design perspective, an explicit AA:AA code could be engineered by constraining proteins to limited alphabets or repeat motifs, where interaction specificity emerges from repeating patterns of side-chain chemistry and geometry. This mirrors how TALE proteins use a simple amino-acid pair code to recognize DNA, but adapted to protein–protein recognition. Thus, AA:AA coding is best understood not as a lookup table, but as a designed interaction grammar grounded in chemistry and structure.

PROMPT:

Here are the slides for Reading & Writing Life by George Church. I have to answer one of the following two questions (only one, not both): A. [Using Google & Prof. Church’s slide #4] What are the 10 essential amino acids in all animals and how does this affect your view of the “Lysine Contingency”? B. [Given slides #2 & 4 (AA:NA and NA:NA codes)] What code would you suggest for AA:AA interactions?

At the moment, I don’t know which one is easier to answer. We will use the PDF to determine which question its content most likely covers, and so reduce the uncertainty. To do this, we will:

- Break down the problem into sub-elements.

- Treat each of them with an explicit confidence level (0.0 - 1.0).

- Verify by checking the logic, facts, completeness, possible biases, what could be done, and how to achieve the objective (determine which question is easiest to answer without being wrong and give the answer).

- Synthesize and combine using weighted confidence levels.

- If confidence is below 0.8, identify weaknesses and how we could proceed to achieve a better level. Formulate clear answers, the level of confidence, and key points for vigilance.
